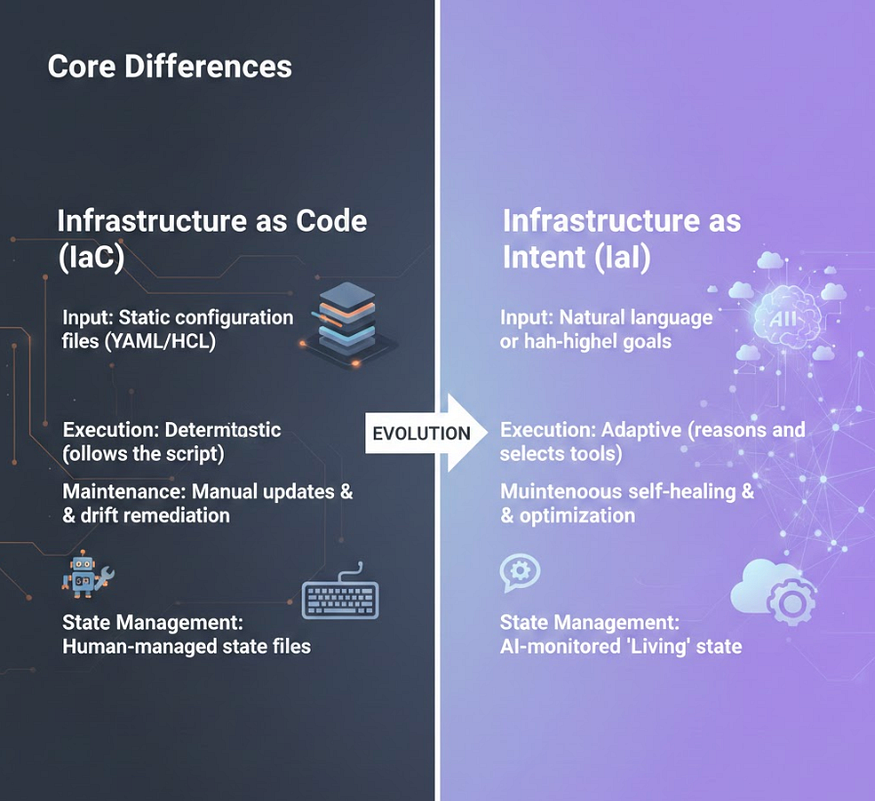

For over a decade, Infrastructure as Code (IaC) has been the gold standard for cloud management. We moved away from manual console clicks to declarative tools like Terraform and CloudFormation. But even with IaC, we are still the “translators”, taking business requirements and painstakingly converting them into hundreds of lines of HCL and YAML.

In 2025, the paradigm is shifting. We are entering the era of Infrastructure as Intent (IaI), powered by Agentic AI.

Table of Contents

- Why IaC Isn’t Enough Anymore

- Building an Agentic DevOps Loop

- The Future

- Additional Resources & Conclusions

Disclaimer: All views expressed are my own. This content is curated for readers with an introductory background in AI and Cloud computing.

Why IaC Isn’t Enough Anymore

While IaC made infrastructure repeatable, it didn’t make it intelligent. If a service experiences an unexpected traffic spike, a standard Terraform configuration has no way of knowing it needs to scale. A human has to update the count variable and trigger a pipeline.

Infrastructure as Intent changes the relationship. Instead of writing out the recipe for the cloud step by step, you tell an AI Agent what you want to achieve.
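
To make the contrast concrete, here is a minimal sketch of an intent expressed as data, with a function that derives the number a human would otherwise type into the count variable. The ScalingIntent schema, its field names, and the traffic figures are all assumptions made up for illustration; a real agent would read load from your monitoring stack.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class ScalingIntent:
    """What we want, not how to get it (hypothetical schema)."""
    max_requests_per_instance: int  # load one instance should carry
    min_instances: int
    max_instances: int


def desired_instance_count(intent: ScalingIntent, observed_rps: float) -> int:
    """Derive the instance count from observed load, clamped to the intent's bounds."""
    needed = math.ceil(observed_rps / intent.max_requests_per_instance)
    return max(intent.min_instances, min(intent.max_instances, needed))


intent = ScalingIntent(max_requests_per_instance=200, min_instances=2, max_instances=10)
print(desired_instance_count(intent, observed_rps=150))   # quiet period -> 2
print(desired_instance_count(intent, observed_rps=1500))  # traffic spike -> 8
```

The intent stays the same through the spike; only the derived count changes.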

Created by Author

Building an Agentic DevOps Loop

A true DevOps Agent isn’t just a chatbot; it is a system with reasoning, memory, and tools. It uses a “Reasoning Loop” to interact with your cloud provider.
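
As a rough mental model, the three ingredients can be sketched in plain Python with no LLM involved. MiniAgent and its decide rule are hypothetical stand-ins: in a real agent, the decide step is where the model does its reasoning.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class MiniAgent:
    """Toy agent: the tools it may call plus a memory of what happened."""
    tools: Dict[str, Callable[[str], str]]
    memory: List[str] = field(default_factory=list)

    def decide(self, goal: str) -> str:
        """Stand-in for LLM reasoning: pick the next tool based on memory."""
        # Hypothetical rule: plan first, apply only once a plan is remembered.
        has_plan = any(entry.startswith("plan:") for entry in self.memory)
        return "apply" if has_plan else "plan"

    def step(self, goal: str) -> str:
        """Choose a tool, run it, and remember the result."""
        tool_name = self.decide(goal)
        result = self.tools[tool_name](goal)
        self.memory.append(f"{tool_name}: {result}")
        return tool_name


agent = MiniAgent(tools={
    "plan": lambda goal: f"1 resource to add for '{goal}'",
    "apply": lambda goal: "deployed",
})
first = agent.step("staging bucket")
second = agent.step("staging bucket")
print(first, second)  # plan apply
print(agent.memory)
```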

How it Works in Practice:

- Intent Processing: You tell the Agent: “Deploy a secure staging environment in eu-west-1 that matches our production security profile.”

- Reasoning: The agent searches your documentation and existing “Production” state files to understand what “secure” means for your company.

- Action: It generates the Terraform, runs a “Plan”, and checks it against security policies.

- Observation: It sees a “Validation Failed” error because of an expired SSL certificate.

- Correction: It automatically requests a new certificate through AWS ACM and re-runs the deployment.
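
The observation and correction steps above can be sketched as a small retry loop. Everything here is mocked: validate_plan, request_certificate, and MAX_ATTEMPTS are hypothetical names, and the certificate set stands in for a real call to a service such as AWS ACM.

```python
from typing import List, Set

MAX_ATTEMPTS = 3  # assumption: stop after a few self-corrections


def validate_plan(valid_certs: Set[str], domain: str) -> str:
    """Mock observation step: fails while the domain lacks a valid certificate."""
    if domain not in valid_certs:
        return "Validation Failed: expired SSL certificate"
    return "Validation Passed"


def request_certificate(valid_certs: Set[str], domain: str) -> None:
    """Mock correction step; a real agent might call AWS ACM here."""
    valid_certs.add(domain)


def deploy_with_correction(domain: str) -> List[str]:
    """Run act -> observe -> correct until validation passes or attempts run out."""
    valid_certs: Set[str] = set()  # starts empty to simulate the expired certificate
    log: List[str] = []
    for attempt in range(1, MAX_ATTEMPTS + 1):
        observation = validate_plan(valid_certs, domain)
        log.append(f"attempt {attempt}: {observation}")
        if observation == "Validation Passed":
            log.append("deployed")
            return log
        if "certificate" in observation:
            request_certificate(valid_certs, domain)
            log.append("correction: requested new certificate")
    log.append("gave up: escalate to a human")
    return log


for line in deploy_with_correction("staging.example.com"):
    print(line)
```

The attempt cap matters: an agent that can correct itself also needs a point at which it stops and hands the problem to a person.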

Code Example: This AI Agent has access to two mock Terraform tools (plan and apply) and uses an LLM to decide how to use them.

    # Assumes a LangChain release that still ships AgentExecutor and
    # create_openai_functions_agent (the 0.1 to 0.3 line), plus langchain-openai.
    # ChatOpenAI reads the API key from the OPENAI_API_KEY environment variable.
    from langchain.agents import AgentExecutor, create_openai_functions_agent
    from langchain.tools import tool
    from langchain_core.prompts import ChatPromptTemplate, MessagesPlaceholder
    from langchain_openai import ChatOpenAI


    # 1. Define the 'Tools' the Agent can use
    @tool
    def run_terraform_plan(description: str) -> str:
        """Generates and plans Terraform code based on a natural language description."""
        # In a real app, this would call an LLM to generate HCL and then run 'terraform plan'
        print(f"\n[Agent]: Analyzing requirements for: {description}")
        return "Plan: 1 to add (s3_bucket), 0 to change, 0 to destroy. Security check: PASSED."


    @tool
    def apply_infrastructure(plan_id: str) -> str:
        """Executes a previously reviewed infrastructure plan."""
        # Mock: nothing is deployed here; a real tool would run 'terraform apply'
        return f"Successfully deployed resources for {plan_id}. Endpoint: https://my-app.s3.amazonaws.com"


    # 2. Initialize the "Brain" (LLM)
    llm = ChatOpenAI(model="gpt-4-turbo", temperature=0)

    # 3. Define the Prompt Template
    # agent_scratchpad holds the agent's intermediate tool calls and their results
    prompt = ChatPromptTemplate.from_messages([
        ("system", "You are an expert Cloud Architect Agent. Use tools to plan and deploy infrastructure."),
        ("human", "{input}"),
        MessagesPlaceholder(variable_name="agent_scratchpad"),
    ])

    # 4. Construct the Agent
    tools = [run_terraform_plan, apply_infrastructure]
    agent = create_openai_functions_agent(llm, tools, prompt)
    agent_executor = AgentExecutor(agent=agent, tools=tools, verbose=True)

    # 5. Run the Intent
    agent_executor.invoke({
        "input": "I need a new S3 bucket for the marketing team's assets in us-east-1. Plan it and then deploy it."
    })

This Agent isn’t just following a static script. It is actively deciding which tool to call and evaluating the output of the “Plan” before moving to the “Apply” phase.
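
Relying on the LLM alone to judge the plan may feel thin for production use. One option is a deterministic check that sits between the two tools. The sketch below parses the mock plan string from the example above; safe_to_apply and its rules (no destroys, security check passed) are assumptions for illustration, not something the LangChain example does.

```python
import re

# Matches the summary format used by the mock plan tool in this article.
PLAN_PATTERN = re.compile(r"(\d+) to add.*?(\d+) to change.*?(\d+) to destroy")


def safe_to_apply(plan_output: str) -> bool:
    """Allow apply only for non-destructive plans that passed the security check."""
    match = PLAN_PATTERN.search(plan_output)
    if match is None:
        return False  # unparseable output: fail closed
    to_destroy = int(match.group(3))
    return to_destroy == 0 and "Security check: PASSED" in plan_output


ok = "Plan: 1 to add (s3_bucket), 0 to change, 0 to destroy. Security check: PASSED."
risky = "Plan: 0 to add, 0 to change, 2 to destroy. Security check: PASSED."
print(safe_to_apply(ok))     # True
print(safe_to_apply(risky))  # False
```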

The Future

We are moving toward a future where “DevOps” becomes Platform Engineering for Agents. Instead of writing scripts, senior engineers will soon focus on:

- Guardrails: Writing the policies that restrict what the AI Agent can do.

- Context Injection: Ensuring the AI has the right “Memory” of the company’s architecture.

- Edge AI: Running these agents locally on private clouds to maintain data sovereignty.
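
To give a feel for the first item, here is a minimal sketch of a guardrail as a deny-by-default allowlist that is checked before any tool runs. The Guardrail class, the action names, and the region list are hypothetical; real deployments would more likely lean on IAM policies or a policy engine.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Guardrail:
    """Hypothetical policy: which actions and regions the agent may touch."""
    allowed_actions: FrozenSet[str]
    allowed_regions: FrozenSet[str]

    def permits(self, action: str, region: str) -> bool:
        return action in self.allowed_actions and region in self.allowed_regions


def guarded_call(policy: Guardrail, action: str, region: str) -> str:
    """Check the policy before any tool runs; anything not listed is denied."""
    if not policy.permits(action, region):
        return f"DENIED: {action} in {region}"
    return f"ALLOWED: {action} in {region}"


policy = Guardrail(
    allowed_actions=frozenset({"plan", "apply"}),
    allowed_regions=frozenset({"eu-west-1"}),
)
print(guarded_call(policy, "apply", "eu-west-1"))    # ALLOWED: apply in eu-west-1
print(guarded_call(policy, "destroy", "eu-west-1"))  # DENIED: destroy in eu-west-1
print(guarded_call(policy, "apply", "us-east-1"))    # DENIED: apply in us-east-1
```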

Infrastructure as Intent does not replace the engineer; it replaces the toil. It allows us to move at the speed of thought, leaving the YAML wrangling to the machines.

Additional Resources & Conclusions

To see a real-world demonstration of how these agents are architected at scale, especially within the AWS ecosystem, this session from re:Invent 2025 is a goldmine for any cloud professional.

As always, thank you for reading, and stay tuned for more content coming soon!
