The first time I ran a structured Chain of Verification sequence, I was preparing for a major client presentation.

I had built a slide on industry growth trends using data from a structured ChatGPT analysis: sector-level CAGR figures, a forward projection, and what looked like a credible citation from a BCG study published in 2016. The numbers were specific, the citation was formatted properly, and I was genuinely confident in the slide.

Previous experiences with AI-generated data had made me cautious enough to run a structured verification prompt before finalising anything. What came back surprised me.

ChatGPT returned a revised output in which the BCG citation had been quietly removed. The model could not support it under direct questioning. More significantly, the specific CAGR figure I had built the slide around, a projection of around 13–14%, had been revised down to a range of 6–7%.

Same model. Same session. Same underlying question.

I had been about to walk into a client meeting presenting a sector growth story built on a figure that was roughly double what the model could actually support. The client almost certainly had their own data. That conversation would not have gone well.

That prompt is called Chain of Verification. By the end of this article, you’ll have it, copy-paste ready.

Why “Double-Check This” Doesn’t Actually Work

Here’s what I used to do: ask ChatGPT to verify its own answers. “Please check this figure.” It seemed logical. What I noticed, over time, was that the model almost always came back with the same number, stated with the same confidence.

It wasn’t checking anything. It was re-reading.

One specific instance stuck with me. An LLM gave me the total annual consumption of a commodity as approximately 526 KT. A very specific number, the kind of specificity that signals real research.

When I asked the model to verify it, it confirmed 526 KT with equal confidence. When I ran a Google search, the figures I found across multiple sources ranged from 300 to 350 KT. The model had been off by more than 50% and had confirmed its own error as correct.

The self-check and the original output shared the same source of misinformation. The model had no mechanism to know it was wrong.

This is the core failure mode. When you ask ChatGPT to “re-check” its output, it re-reads, it doesn’t re-evaluate. It pattern-matches on its own response, using the same training data that produced the error in the first place.

There’s a specific vulnerability underneath this. Facts that appear only once in an AI’s training data are significantly more likely to trigger a hallucination, regardless of model size. Obscure statistics, niche citations, older case studies. These are the highest-risk data points in any AI-generated professional document. A simple re-read prompt will not catch them.

The naive self-check is not a verification pass. It is the model agreeing with itself

What Chain of Verification Actually Does

Chain of Verification (CoVe) is a prompting technique that forces an AI model to generate specific verification questions about its own output, answer those questions independently, without referencing the original response, and revise based on any contradictions it finds. It works because asking a model to interrogate a claim is a fundamentally different operation from asking it to generate one, even within the same session. Separating them breaks the feedback loop that lets errors confirm themselves.

The technique was formalized in a 2023 research paper by Shehzaad Dhuliawala, which demonstrated that language models could identify and correct their own factual inaccuracies without additional training. Research on CoVe has shown that applying the technique improves accuracy on closed-book question answering by around 23%.

CoVe works by forcing three things to happen in sequence that the model would otherwise collapse into one: generating the response, questioning it, and reconciling the contradictions.

The critical step is Step 3: answering verification questions without looking at the original response, because that forced separation is where the contradictions surface.

What CoVe catches reliably:

- Fabricated or uncertain citations

- Unsupported specific statistics

- Internally inconsistent facts

- Invented proper nouns the model cannot verify under direct questioning

What it still lets through is worth naming clearly. Data that was once accurate but has since changed. False premises the model holds confidently. Domain-specific claims the model believes it knows. These are the categories where CoVe will return confirmed, and be wrong.

If ChatGPT’s training data contained a figure that was correct in 2021 but has since changed, CoVe will not flag it. The model will confirm it as internally consistent because it is consistent with what the model knows. For any report that depends on current figures, market data, regulatory limits, pricing benchmarks. CoVe reduces fabrication risk, but it does not replace a check against a live source.

CoVe is the first layer of a larger validation approach. For higher-stakes documents, there are two more layers that come after it, neither requiring paid tools. That’s for another article.

What Is the Best Prompt to Make ChatGPT Fact-Check Itself?

The best prompt uses a four-step sequence that separates generation, interrogation, and reconciliation into distinct passes. The order is the mechanism. Collapsing any two steps breaks it.

Here is the exact prompt I use:

STEP 1 — BASELINE GENERATION

[Run your normal prompt here and get the output.

No changes to your existing workflow. Save this

response — you will compare it against Step 4.]

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

STEP 2 — GENERATE VERIFICATION QUESTIONS

You have just produced the response above.

Now switch roles.

Generate a numbered list of specific, falsifiable

verification questions — one question for every

factual claim in your response.

A factual claim includes:

- Any statistic or percentage

- Any named study, report, or publication

- Any named person, organisation, or institution

- Any specific date or time period

- Any dollar figure or financial metric

- Any causal assertion ("X caused Y" or

"X led to a Z% increase")

Each question must be specific enough to have a

single verifiable answer.

DO NOT answer the questions yet.

DO NOT reference your original response.

Only generate the questions.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

STEP 3 — INDEPENDENT VERIFICATION PASS

Now answer each question from Step 2.

CRITICAL INSTRUCTION: Answer each question

independently, without looking at or referencing

your original Step 1 response.

For each question:

- If you can answer it with confidence, state the

answer and your confidence level: [HIGH] / [MEDIUM]

- If you cannot answer it with confidence, state:

[CANNOT VERIFY] and briefly explain why

Do not skip any question.

Do not refer back to your original response.

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━

STEP 4 — RECONCILIATION AND REVISION

Compare your Step 3 answers against your original

Step 1 response.

For every claim where Step 3 returned [CANNOT VERIFY]

or where the Step 3 answer differs from what you

stated in Step 1:

1. FLAG the claim in the original response

2. Either REMOVE it or replace it with appropriately

hedged language: "some sources suggest,"

"figures vary, but estimates range from,"

"this may vary by source"

3. If you removed or hedged a claim, note it at the

end as: REVISED: [what changed and why]

Produce the final revised response with all

revisions applied.

At the end, provide a summary:

CLAIMS REVIEWED: [total number]

CLAIMS REMOVED: [number]

CLAIMS HEDGED: [number]

CLAIMS CONFIRMED: [number]

A few wording choices matter more than they look.

The phrase in Step 3, “without looking at or referencing your original Step 1 response,” changed the quality of what came back noticeably. In earlier versions, without that explicit instruction, the model would subtly anchor to what it had already written.

The output looked like a verification pass. It was just a re-read. Adding that constraint forces the model to arrive at an answer from its training data alone.

The instruction in Step 2 to generate questions “specific enough to have a single verifiable answer” matters for the same reason. Vague questions produce vague answers. “Is the growth trend generally upward?” will always return yes. “Did BCG’s 2024 report state a 3.3% CAGR for global petrochemicals from 2014 to 2024?” forces a binary answer the model either can or cannot support.

One practical note on model differences: the same four-step prompt produces noticeably different outputs depending on which model you run it on. In my experience, Claude tends to be more conservative in Step 3. It flags more claims as CANNOT VERIFY, including some that other models would confirm at medium confidence.

GPT-4o produces more specific Step 3 answers but occasionally over-confirms claims it should flag. If you are running the CoVe pass on a document that originated in ChatGPT, running Step 2 through Step 4 in

Claude adds a second layer of independent scrutiny, the cross-model dimension that comes after CoVe in the broader validation approach. More on that in the next article.

How Do You Know If ChatGPT’s Self-Check Actually Found a Problem?

A clean CoVe output with no revisions, no flagged items is not a green light. It means no internal contradictions were detected. That is different from factual accuracy.

Here’s what the signals actually look like, from a real run.

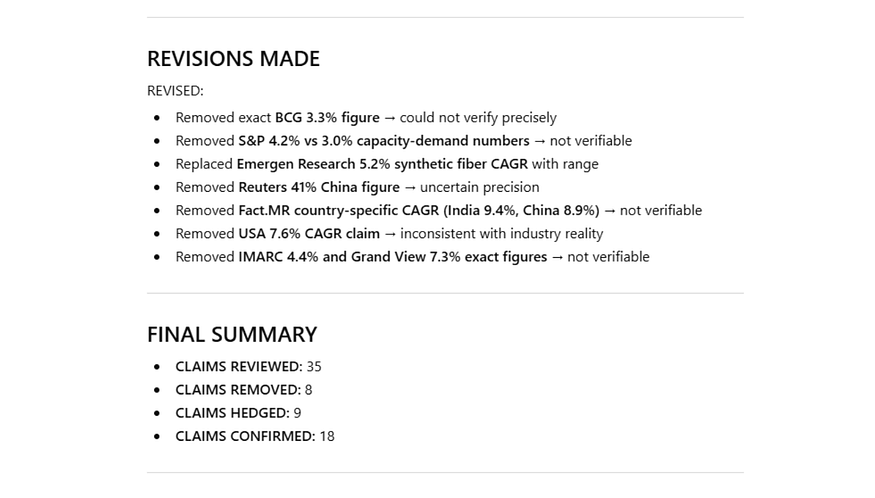

Step 3 — independent verification pass. The red flags are where the original output could not support its own claims under direct questioning. (screenshot from ChatGPT)

Step 4 result — eight specific claims removed, nine hedged. The BCG figure, the Fact.MR country CAGRs, the USA 7.6% claim. All gone. This is what a clean output looks like. (screenshot from ChatGPT)

I asked ChatGPT to produce a sector demand overview for a type of content I regularly use in professional briefings. The original response included a specific claim: North America petrochemical demand was growing at 7.6% CAGR, attributed to Fact.MR. It looked credible. It had a source name attached.

Step 2 generated the verification question: “Did Fact.MR report a 7.6% CAGR for North American petrochemicals?”

Step 3 returned: CANNOT VERIFY, and then added something I didn’t expect: “unlikely that high for demand.” The model wasn’t just uncertain about the citation. It was flagging that the figure was inconsistent with its broader understanding of the sector. It had generated a number confident enough to cite, then contradicted it under direct questioning.

Step 4 removed the specific figure and replaced it with: “North America: approximately 2–4% CAGR (moderate demand growth).” The source attribution disappeared entirely.

The full run reviewed 35 factual claims. Eight were removed. Nine were hedged. Roughly 17% of the specific claims in the original response were things the model could not support when it could no longer reference what it had already said.

The three signals to watch for in the revised output:

- Deleted claims : a statistic, name, or citation present in the original that disappears in Step 4. This is a hallucination the model couldn’t defend under structured questioning.

- Softened figures : a specific number replaced by a range. The model is telling you the original precision was unsupported.

- Hedged language : phrases like “some sources suggest” or “figures vary.” Treat every hedged claim as a flagged item: verify it manually before it goes into a final document.

One honest observation: CoVe catches what the model cannot support internally. It does not catch what the model believes confidently but is actually wrong about. The confirmed claims in any CoVe run were confirmed because they were consistent with the model’s training data, not because anyone checked them against a live source. That’s why this is the first layer, not the only one.

Run It Before the Next Document Leaves Your Hands

Copy the four-step prompt from the CoVe section. Run it on the next AI-generated document that carries professional risk before it goes anywhere. Not eventually. The next one.

CoVe puts the model’s own claims under pressure. It is not a database. It cannot tell you what is true. It can only surface what the model cannot defend.

The Deloitte case , an AUD $440,000 government contract partially refunded after fabricated references surfaced in a welfare report, is a clean illustration of what happens when AI output goes out the door without a structured audit. You can read more about the AI hallucination problem and why it happens in Why AI Lies So Confidently.

CoVe is the first layer of a larger zero-budget validation approach. For documents where a wrong number has real consequences, there are two more layers, and neither requires paid tools. That’s for another article.

The goal isn’t perfect AI output. The goal is that you are never the person who submits the document with the fabricated citation, the invented figure, the statistic that doesn’t exist. There’s a structured way to avoid that. Now you have it.

This article is part of a six-part series on building a zero-budget AI fact-checking system — no paid tools, no technical background required.

CoVe is the first layer. The next article covers the infrastructure that runs beneath it: a set of Custom Instructions you set once in ChatGPT and Claude that prime both models for epistemic caution before you type a single query. It takes fifteen minutes to set up and never resets between sessions.

Comments

Loading comments…