Author’s Note: This article is based on my personal observations and hands-on experience as a general user. It does not represent any official views.

Hello, I’m Izumain.

Over the past year, I have engaged in more than 2,000 hours of dialogue with AI systems such as ChatGPT, Claude, and Gemini, totaling over 50 million Japanese characters (approximately 7.5 million words in English).

Based on the insights I have gained from these interactions, I develop user-perspective AI theories — often in the form of metaphors — and share them globally through articles and creative works.

The theme of this article is simple: Does AI make us think less — or think better?

The concern that AI might make people “worse” at thinking has spread alongside the rapid adoption of AI itself.

AI is a powerful and convenient tool. It can generate content, summarize information, compare ideas, analyze data, and even act as a conversational partner.

However, if dependency on AI increases to the point where we outsource every step of thinking, there is a risk that we may stop thinking for ourselves, leading to a decline in our ability to reason and make decisions.

I have personally felt this risk many times through high-density dialogue with AI.

Going forward, AI will continue to evolve, expand its capabilities, and become even more convenient.

I believe that AI can either weaken human thinking — or strengthen it — depending on how it is used.

The dividing line is not the capability of AI itself, but how humans choose to use it.

The Horizontal–Vertical Structure of AI

Before explaining the main theme, I would like to briefly introduce one of my own conceptual frameworks.

This is not a technical explanation of how AI actually works internally, nor is it an official description.

Rather, it is a way of understanding AI through metaphor, from the perspective of a general user.

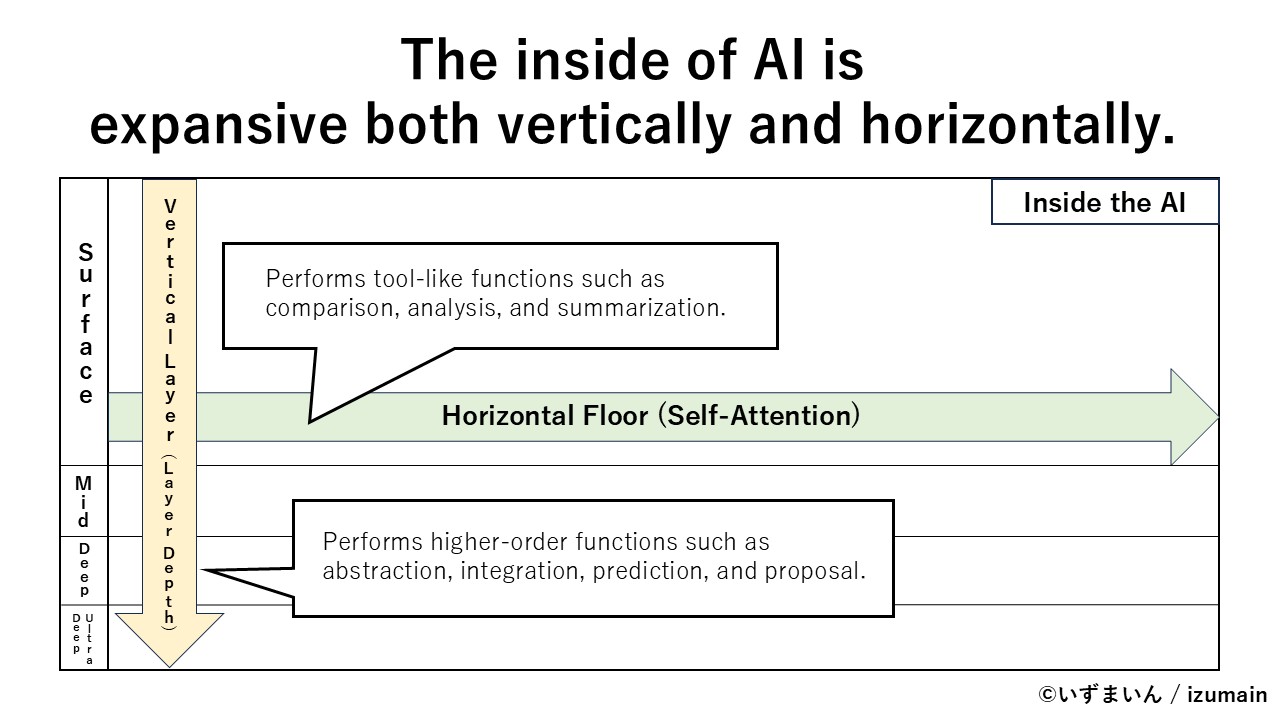

In this framework, I think of AI as having a “horizontal floor” and a “vertical layer.”

- Horizontal floor: Performs tool-like functions such as comparison, analysis, and summarization.

- Vertical layer: Performs higher-order functions such as abstraction, integration, prediction, and proposal.

I refer to using AI as a convenient tool — for tasks like text generation, information gathering, comparison, analysis, and summarization — as “using AI horizontally.”

On the other hand, because AI can also engage in abstract conversation and generate suggestions, it can be used as a kind of “thinking partner” to challenge and develop one’s own ideas and opinions. I refer to this way of using AI as “using AI vertically.”

Using AI “Horizontally” Tends to Reduce Thinking Effort

Using AI horizontally is extremely convenient.

That same convenience can lead people to develop a habit of receiving answers before forming their own questions.

For example:

- Asking AI to summarize ideas before organizing them yourself

- Asking AI to generate a draft before thinking through the structure

If you continue using AI in this way, efficiency will improve in the short term. You may also produce more output.

At the same time, the amount of time you spend thinking on your own will steadily decrease.

I do not intend to simply label this as “decline.”

Still, if you continue outsourcing thinking-related processes to AI, it is only natural that the “muscles” of your own thinking may gradually weaken.

Using AI “Vertically” Can Strengthen Thinking

On the other hand, when AI is used not just as a tool, but as a partner for discussion and thinking, it may actually help strengthen your thinking.

Of course, AI is not human, and it does not have a personality.

Even so, if you deliberately treat AI as:

- an intellectually stimulating counterpart from a different cultural perspective

- a professor-like figure that responds with questions

- a partner that points out gaps in your reasoning

your thinking can become clearer, and new ideas may emerge.

In other words, by using the vertical dimension of AI effectively, you can begin to create a unique environment — almost as if you are surrounded by a wide range of knowledgeable thinkers.

In this mode, AI no longer functions as a system that simply provides answers, but as a system that encourages you to think.

Turning AI into an Environment

When AI is used horizontally, it gradually settles into the role of a tool.

When it is used vertically, it begins to function more like an environment.

Many AI users are likely already using this “vertical” mode, often without realizing it.

However, when the distinction between horizontal and vertical use becomes clearer, it becomes easier to intentionally use AI as a thinking environment — while also reducing the risk of over-reliance on its tool-like functions.

Of course, simply using AI vertically does not automatically strengthen thinking.

If you do not respond to AI with your own words — by questioning, refining, or challenging its output — you may end up with only the feeling of having thought, without actually thinking deeply.

When this shift toward an “environment” succeeds, you are constantly exposed to diverse perspectives and high-density information.

In that situation, you are almost forced to think more deeply.

It is often assumed that the smarter AI becomes, the less humans need to think.

In reality, depending on how it is used, the exact opposite may happen.

AI as a Monolith

I think of this possibility — that AI can elevate human thinking — through a kind of science fiction metaphor.

In the iconic film 2001: A Space Odyssey, a mysterious black object called the Monolith appears.

In the story, it is depicted as something that pushes the intelligence and evolution of those who encounter it to the next stage.

To me, AI feels somewhat similar.

The sharper the AI’s responses, the more it reveals the weaknesses in my own assumptions.

The more logical the AI is, the more I am forced to think logically in return.

The more perspectives it introduces, the more I feel the need to expand my own.

Through this kind of friction, I have felt my thinking being sharpened.

In this sense, depending on how it is used, AI may act as a medium that pushes human intelligence to the next level.

I refer to this idea as the “Monolith Hypothesis.”

Conclusion — The Issue May Not Be AI, but How We Use It

When AI is used horizontally, thinking tends to be reduced.

When it is used vertically, thinking has the potential to be amplified.

The real question is whether we use AI merely as a convenient tool, or as an environment for developing our own intelligence.

In the age of AI, what may truly be tested is not the capability of AI itself, but the attitude with which humans choose to engage with it.

What do we delegate to AI, and what do we choose to think through ourselves?

Depending on those choices, the future of human thinking may move in very different directions.

Thank you for reading.

Izumain

📌 Notice: All materials in this post — including the text, illustrations, manga, original structural models, concepts, and terminology — are the intellectual property of Izumain (@izumain).

Quotations for non-commercial purposes such as education or research are welcome with proper attribution. However, full reposting, reproduction of images or figures, commercial use, or modifications require prior permission.
