Every web scraping project follows a predictable pattern. It works well for the first few weeks. Then the Amazon Web Services (AWS) bill arrives. At that point, it becomes clear that modern web scraping is not only a coding problem. It is an infrastructure problem.

Reviewing 12 scraping projects on Reddit and GitHub, the pattern was consistent. An Instagram scraper costs $2,500 per month. A TikTok pipeline costs $5,000 per week. A LinkedIn tool required $40,000 per year in infrastructure. The code was free. The infrastructure was not. This article explains why web scraping costs scale rapidly and what approaches work in production.

The Pattern That Bankrupts Developers

Web scraping projects often follow the same cost pattern. A developer spends two to three weeks building a scraper. It works well on a local machine. The scraper extracts Instagram profiles, TikTok videos, or LinkedIn data. The code moves to production. Then, infrastructure costs increase significantly.

Recent data shows that 62.5 percent of web scraping professionals reported increased infrastructure costs, and 23.3 percent reported increases greater than 30 percent.

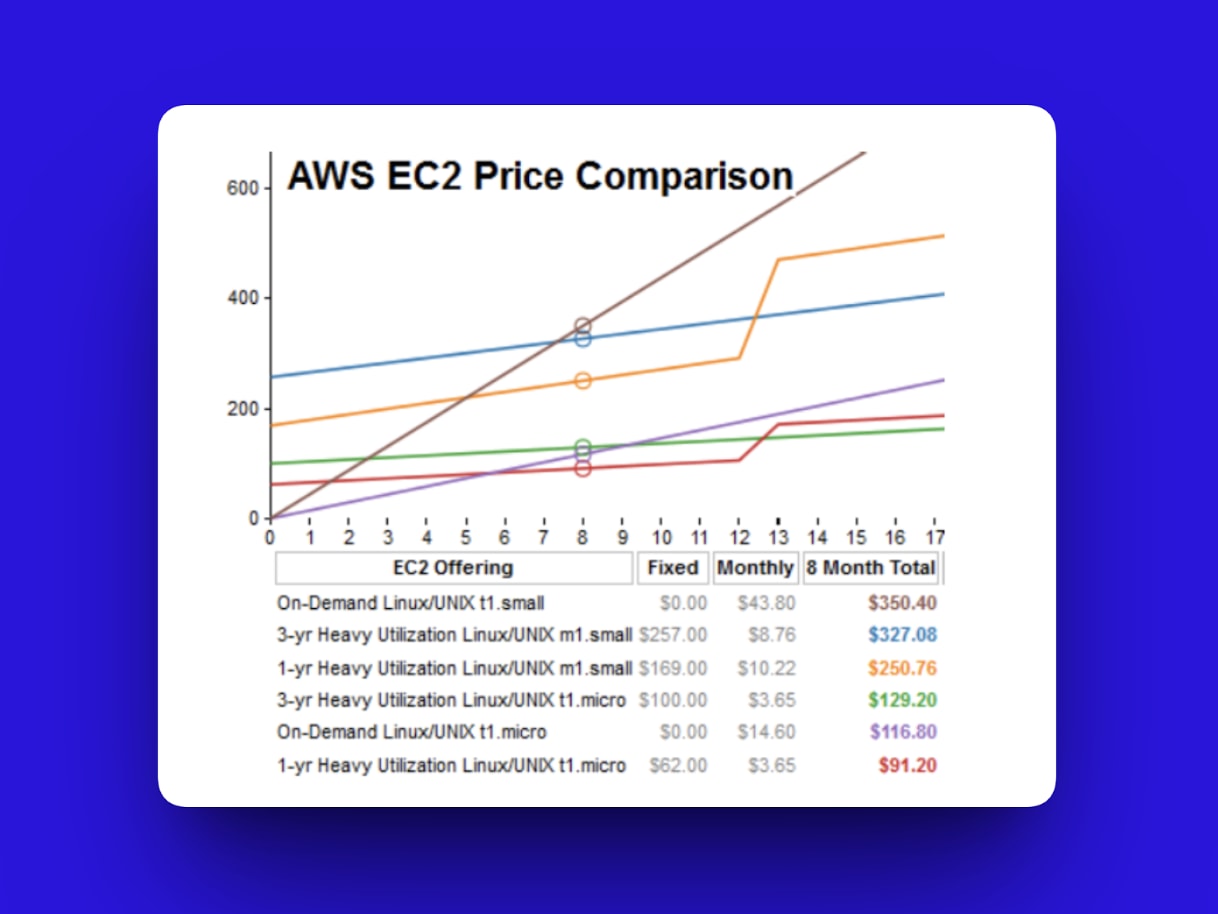

Image reference: AWS EC2 pricing comparison chart

A Reddit user built a TikTok search engine in 18 days. The first production scrape cost $2,500. The projected annual infrastructure cost was $60,000. In another example, a GitHub project scraped Instagram profiles at $0.40 each during testing. At 10,000 profiles, monthly costs increased from $40 to $2,000. LinkedIn tools that started on free tiers reached $40,000 per year after scaling.

These increases occur because development environments do not reflect production scale. Local testing might process 50 profiles in 10 minutes. Production might require 500,000 profiles per day. This scale requires continuous compute, proxy infrastructure, storage, retry systems, and monitoring. Code complexity increases linearly. Infrastructure costs increase much faster. This mismatch explains why scraping becomes expensive at scale.

Case Study Analysis

This section examines real-world projects and how infrastructure costs increased during production.

Case 1: TikTok search engine

A developer built a TikTok search engine using Python, Selenium, and headless Chrome. During testing, a single EC2 (Amazon Elastic Compute Cloud) instance extracted 1,000 videos per hour.

In production, TikTok blocked automated traffic. The developer added residential proxies at $15 per GB. Each request required full browser rendering. Storage reached 400 GB in the first month. Compute scaled to eight c5.2xlarge instances running continuously.

The first production scrape cost $2,500 for 50,000 videos. Monthly cost stabilized at $4,800. The annual projection was $57,600.

The developer spent four months optimizing the system. Improvements included switching to Puppeteer, improving proxy rotation, and reducing instance size. Final cost was $3,200 per month or $38,400 per year. The optimization required 320 hours of engineering time.

Case 2: Instagram profile scraper

A developer built an Instagram scraper using Puppeteer, Docker, and PostgreSQL.

Development ran locally at no cost. Production initially used a small EC2 instance and managed database, costing $43 per month. This supported 100 profiles per day.

Scaling to 10,000 profiles per day required major infrastructure changes. The developer added residential proxies at $50 per IP per month. CAPTCHA solving costs about $40 per month. Compute scaled to five instances. Storage reached 800 GB.

Final monthly infrastructure cost reached $1,847. Although the cost per profile decreased, the total cost increased significantly. The project was eventually discontinued due to infrastructure expenses.

Case 3: LinkedIn lead generation tool

A startup built a LinkedIn lead generation tool using Playwright and MongoDB. The initial infrastructure cost was minimal.

As LinkedIn increased detection, the startup added proxy services, browser fingerprinting, and additional compute resources.

The monthly infrastructure cost reached $3,400. Annual cost reached $40,800. The company eventually switched to a third-party API. This increased per-lead cost but eliminated infrastructure complexity.

The Common Thread

These projects shared the same pattern. Development was fast and inexpensive. Production introduced significant infrastructure costs.

Social platforms actively prevent automated scraping. This requires proxies, CAPTCHA solving, browser fingerprinting, and monitoring. These requirements increase cost beyond initial estimates.

The Hidden Cost Breakdown

Web scraping infrastructure costs typically fall into several categories.

- Proxy infrastructure accounts for 35 to 45 percent of the total cost. Residential proxies typically cost between $1.50 and $4.00 per GB. A scraper extracting 100,000 profiles per month might require 80 GB of proxy traffic, costing about $960 per month.

- Compute infrastructure accounts for 25 to 30 percent. Full browser automation requires significant memory and CPU. Multiple instances must run continuously to avoid rate limits.

- Storage accounts for 10 to 15 percent. HTML archives, structured data, and media files accumulate over time. Storage requirements often reach terabytes within months.

- Error handling and retries account for 8 to 12 percent. Failure rates between 10 and 20 percent increase total infrastructure usage.

- CAPTCHA solving accounts for 5 to 10 percent. Even low per-request costs increase significantly at scale.

- Monitoring accounts for 3 to 5 percent. Monitoring tools detect failures, blocking, and data corruption.

- Development time is often the highest hidden cost. Optimization can require hundreds of hours. This time represents a significant engineering expense.

Infrastructure Economics Reality

Infrastructure costs increase faster than scraping volume. Increasing scraping volume requires more advanced evasion techniques, more infrastructure, and more engineering time.

This is the central challenge of web scraping at scale. The primary constraint is not code. It is infrastructure economics.

Economies by Scale

There is a place for do-it-yourself (DIY) web scraping. There is also a place for combining DIY with third-party services. Finally, there is a place for fully relying on third-party services to handle complexity and bot detection.

The following 2026 breakdown compares industry leaders such as Bright Data, Oxylabs, and ScrapingBee with a standard DIY stack that includes Python, Playwright, infrastructure, time, and residential proxies.

| Scale | Managed API (Avg. Cost of Web Scraping Services /Profile) | DIY Stack (Avg. Cost/Profile) | DIY Breakdown Point |

|---|---|---|---|

| 100 | $0.015 ($1.50 total) | $0.00 + Time (2 - 4 hours weekly) | Negligible. Fine for manual research or hobbyists. |

| 1K | $0.002 ($2.00 total) | $0.015 (Proxies) + 8 – 12 hours for maintenance | You will spend more on a minimum residential proxy deposit ($15+) than the API costs. |

| 10K | $0.0015 ($15 total) | $0.008 (Proxies) + Infrastructure costs + 12 - 24 hours a month to repair breaks | IG's weekly UI/API changes mean you're spendingmore time per month on the repair code. |

| 100K | $0.0012 ($120 total) | $0.005 (Infra + Maint ) + 12 - 36 hrs/month + $540 -$720 monthly developer cost. | You now need TLS (Transport Layer Security) fingerprinting logic for secure communication in web scraping. Without it, failure rates hit 40%+. |

| 1M | $0.0008 ($800 total) | $0.004 (Heavy Operating Expenditure) + A full time (9am-5pm) $100k/year employee(s) + other unpredictable expenses | You need at least a dedicated $100k/yr engineer to effectively avoid web scraping defenses. |

The Optimization Trap

Developers often attempt optimization to reduce DIY web scraping costs. The following options are common.

- Serverless platforms: Services such as AWS Lambda are cost-effective and fast to deploy for small projects. Lambda offers one million free requests per month. However, when scrapers run continuously or process hundreds or thousands of pages, Lambda becomes more expensive than virtual machines.

- Virtual machines: Each virtual machine includes a dedicated IP address. Running multiple virtual machines reduces detection risk. However, you pay fixed CPU and RAM costs regardless of actual usage.

- Bare metal servers: These are dedicated physical machines that provide more computing power per dollar than virtual machines. However, they require upfront setup, ongoing maintenance, and fixed monthly contracts.

- Proxy management: Developers would instinctively consider using and rotating cloud IPs for web scraping tasks, but website rate-limiting infrastructure would limit the effectiveness of their scrapers. Purchasing data center proxies is the next affordable option, but if the site you want to scrape bans cloud IPs, data center IPs won’t be sufficient.

Then you move up to purchase residential proxies, which are often considered more effective at dealing with anti-bot sites, but they aren’t foolproof. If it fails, the next step is to use highly expensive mobile proxies, before resorting to the largest web scraping proxy tool: web unblocker services. Unless you know which proxy tool would be most effective for your project before starting, time and cost could be wasted before obtaining your ideal solution.

Build vs Buy Decision Framework

- Build phase: Building is effective for scraping 100 to 1K profiles if maintenance remains under two hours per month. Developer time has a direct cost. For example, at $100 per hour, maintenance quickly exceeds the cost of third-party services.

- Buy phase: When residential proxy costs reach $12 to $20 per month, third-party services become more cost-effective. For example, Bright Data offers a similar capability for about $15 per month, including infrastructure and maintenance. This phase typically begins above 10K profiles.

- Build and buy phase: Companies with web scraping expertise often adopt a hybrid approach. They maintain their own extraction logic and use third-party scraping browsers to avoid detection. DIY methods can handle public profile data, while third-party services handle complex targets such as follower lists. This approach is effective between 50K and 500K profiles. Total hybrid cost equals DIY expenses plus third-party expenses.

The final decision depends on your priorities. Test each approach and measure performance and cost. Many developers prefer building due to technical curiosity. However, third-party services reduce maintenance time and prevent cost overruns once scaling increases.

Conclusion

If you haven’t yet scaled a web scraping project, you have an advantage. You now understand that the real challenge is not writing extraction logic but managing the economics of scale. Avoiding the “$60K web scraping tax” begins with modeling infrastructure costs before traffic increases.

If you are already experiencing rising proxy bills, compute expenses, and growing maintenance overhead, the situation is not irreversible. The next step is to reassess your cost structure, quantify the value of engineering time, and determine where building stops being efficient and buying becomes the rational decision.

At higher volumes, most teams eventually reach a point at which third-party infrastructure is cost-effective. Testing a managed solution, such as Bright Data’s free trial allows you to evaluate performance and total cost before making a long-term commitment.

Web scraping does not fail because of code. It fails when economics are ignored.

Comments

Loading comments…