As an AI Engineer, I spend most of my days working with high-level frameworks such as PyTorch and TensorFlow, building neural networks that parse language or recognize images. But beneath the layers of abstraction, below the tensors and the activation functions, lies the foundational bedrock of all computing: bits.

Understanding how to manipulate these bits isn’t just a party trick — it’s a critical skill for optimizing low-level systems, writing custom CUDA kernels, and crushing Data Structures and Algorithms (DSA) interviews.

Today, we are going to dive deep into a classic bit-manipulation concept: the parity check. We will build our intuition, look at simple examples, and then tackle a heavy-hitting algorithmic question:

How would you compute the parity of a very large number of 64-bit words?

What is a Parity Check? (The Intuition)

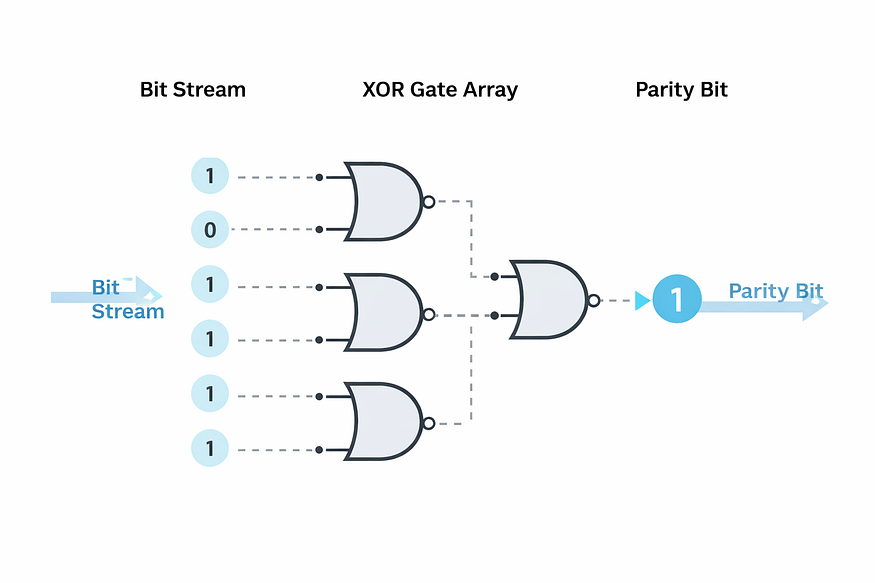

In the realm of data transmission and storage, bits can sometimes get flipped due to hardware noise or interference. To detect these single-bit errors, engineers invented the concept of parity.

The parity of a binary word is simply 1 if the number of set bits (1s) is odd, and 0 if the number of set bits is even.

Simply put: > * Odd Parity: The number of 1s is odd (Parity = 1).

- Even Parity: The number of 1s is even (Parity = 0).

A Quick Example

Let’s look at a few 8-bit integers:

10110101-> Contains five1s. The parity is 1 (Odd).10001000-> Contains two1s. The parity is 0 (Even).

When data is sent across a network, the sender calculates the parity bit and attaches it to the data. The receiver then recalculates the parity upon arrival. If the computed parity doesn’t match the sent parity, an error has occurred!

The Big Question

Now that we have the intuition, let’s tackle a classic technical interview question:

“How would you compute the parity of a very large number of 64-bit words?”

Notice the phrasing here: a very large number. This is a massive hint that while we need a fast algorithm for a single 64-bit word, we should also be thinking about caching or preprocessing to handle millions of queries efficiently.

Let’s walk through the evolution of the solution, from the naive brute force to the ultimate optimized approach.

Solution 1: The Brute Force Approach 🐢

The most straightforward way to compute the parity is to process the number bit-by-bit. We can check the least significant bit (LSB) using the bitwise AND operator (x & 1), update our parity, and then right-shift the number (x >> 1) until it becomes zero.

def parity_brute_force(x: int) -> int:

result = 0

while x:

result ^= (x & 1) # XOR the LSB with our result

x >>= 1 # Shift right by 1 to check the next bit

return result

- Time Complexity: O(n), where n is the word size (64 in our case). We have to do a shift and an XOR for every single bit up to the most significant set bit.

- Space Complexity: O(1).

While simple, this is too slow if we are processing millions of 64-bit integers. We need to do better.

Solution 2: Brian Kernighan’s Algorithm 🐇

What if we could skip the 0s and only process the 1s? Enter Brian Kernighan's algorithm.

In bit manipulation, the operation x & (x - 1) essentially erases the lowest set bit of x. We can use this trick to loop exactly as many times as there are 1s in the number!

def parity_kernighan(x: int) -> int:

result = 0

while x:

result ^= 1 # Flip the parity for every set bit we find

x &= (x - 1) # Drop the lowest set bit

return result

- Time Complexity: O(k), where k is the number of set bits (1s) in the word. In the worst case (all 1s), it’s still O(n), but on average, it’s significantly faster than brute force.

- Space Complexity: O(1).

This is a brilliant trick to keep in your arsenal, but for a 64-bit word with many set bits, we can still push the envelope further.

Solution 3: The XOR Fold (Word-Level Parallelism) 🐆

Here is where the math gets truly elegant. The XOR operator (^) is associative and commutative. Because we only care about the parity of the entire 64-bit word, we can fold the word in half, XOR the two halves together, and the parity of the resulting 32-bit word will be the same as the original 64-bit word!

We can repeat this folding process logarithmically: 64 bits → 32 bits → 16 bits → 8 bits → 4 bits → 2 bits → 1 bit.

def parity_xor_fold(x: int) -> int:

x ^= x >> 32

x ^= x >> 16

x ^= x >> 8

x ^= x >> 4

x ^= x >> 2

x ^= x >> 1

return x & 1

- Time Complexity: O(log n), where n is the word size (64). This requires exactly 6 shifts and 6 XOR operations, no matter how many bits are set!

- Space Complexity: O(1).

Solution 4: The Lookup Table (The Ultimate Boss) 🚀

If we are calculating the parity for billions of 64-bit integers, doing 6 shifts and XORs per integer adds up. What if we precomputed the parity for smaller chunks of bits and just looked them up?

We can create a cache (a lookup table) for all possible 16-bit integers. Since 2**16 = 65536, an array of this size easily fits into modern CPU caches (L1/L2 cache).

We can then split our 64-bit word into four 16-bit chunks, look up the parity for each chunk in O(1) time, and XOR the results together!

# Precompute the parity for all 16-bit numbers (0 to 65535)

# We can use our XOR fold function to build this cache quickly at startup.

PRECOMPUTED_PARITY = [parity_xor_fold(i) for i in range(1 << 16)]

def parity_lookup(x: int) -> int:

# A 64-bit number is split into four 16-bit chunks

MASK_16_BIT = 0xFFFF

# Extract each 16-bit chunk by shifting and masking

chunk1 = x >> 48

chunk2 = (x >> 32) & MASK_16_BIT

chunk3 = (x >> 16) & MASK_16_BIT

chunk4 = x & MASK_16_BIT

# Look up the parity of each chunk and XOR them together

return (PRECOMPUTED_PARITY[chunk1] ^

PRECOMPUTED_PARITY[chunk2] ^

PRECOMPUTED_PARITY[chunk3] ^

PRECOMPUTED_PARITY[chunk4])

- Time Complexity: O(n/L), where L is the width of our cached words (16 bits). Essentially, this resolves to O(1) operations per 64-bit word, making it insanely fast for large datasets.

- Space Complexity: O(2^L) to store the cache. For 16 bits, it’s just an array of 65,536 integers, which is trivial for modern machines.

Key Takeaways

- Understand the Basics: Parity is a foundational concept in error detection. Odd parity means an odd number of 1s; even parity means an even number of 1s.

- Know your Bitwise Operators:

x & (x - 1)is a magic trick for dropping the lowest set bit and is invaluable in DSA interviews. - Think Logarithmically: The XOR Fold approach demonstrates how we can process data in parallel within the word itself, reducing an O(n) scan to an O(log n) fold.

- Context is King: The phrase “a very large number of words” changes the optimal solution. In production environments (and senior-level interviews), caching and memory-time tradeoffs are the keys to ultimate performance.

Next time you are training an AI model or writing an algorithm, remember the humble bit. The most complex architectures on the planet rely on these hyper-efficient, low-level operations to function at scale.

What are your thoughts?

Have you ever used bit manipulation to optimize a bottleneck in your codebase? Let me know in the comments below!

If you found this article helpful, please give it a clap 👏, share it with your network, and follow me for more deep dives into Python, Data Structures, and AI Engineering!

Comments

Loading comments…