Anthropic recently announced “Claude Code Security” alongside specialised tools aimed at modernising legacy systems written in languages like COBOL. The market reaction was sharp. Investors clearly see this as more than a minor feature release or a simple IDE extension.

We are seeing noticeable movement in the enterprise software space, with traditional security and modernisation vendors facing sudden scrutiny. But what does this actually mean for developers and security engineers on the ground?

Are we looking at the end of traditional static analysis, or is this just the next evolution of developer tooling?

What Was Actually Announced

To understand the industry reaction, we need to look at what Anthropic actually shipped. The announcement centred on an agentic approach to coding and security that lives natively in the terminal.

First, Claude Code aims to operate autonomously across an entire repository. It reads the file system, understands the build process, and executes terminal commands. In the context of security, Anthropic claims it can identify vulnerabilities, trace their execution paths, and generate and apply the necessary patches automatically.

Second, Anthropic placed a massive emphasis on legacy modernisation. They introduced capabilities specifically tuned for translating decades-old COBOL codebases into modern languages. This involves analysing massive, often undocumented mainframe systems, understanding the underlying business logic, and mapping it to contemporary architectures without dropping constraints.

The goal here is not just auto-completion. The goal is stateful, context-aware execution.

Why Cybersecurity Tools Might Feel Threatened

Traditional cybersecurity tools, particularly Static Application Security Testing (SAST) platforms, have operated on the same fundamental principles for years.

They are deterministic. They rely on pattern matching, regular expressions, and parsing code into Abstract Syntax Trees (ASTs) to find known vulnerabilities. If a developer uses a risky function like eval() or a risky property like dangerouslySetInnerHTML, the static analysis tool flags it based on a pre-defined rule.

This approach is highly reliable for finding known-bad patterns, but it is largely blind to the context around them.

A traditional scanner might flag a hardcoded string as a potential credential leak. It has no way of knowing that the string is actually a dummy variable injected exclusively during local unit testing. This lack of context creates the massive volume of “false positives” that security teams spend hours manually triaging.
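The rule-based behaviour described above can be sketched with Python's built-in `ast` module. This is a minimal illustration, not how any particular commercial scanner works: the two rules, the `RISKY_CALLS` and `SECRET_HINTS` sets, and the file names are all assumptions made up for the example.

```python
import ast

# Hypothetical rule set for illustration; real scanners ship far larger ones.
RISKY_CALLS = {"eval", "exec"}
SECRET_HINTS = ("password", "secret", "token")


def scan_source(source: str, filename: str) -> list[str]:
    """Return one finding per rule match, using only the syntax tree."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        # Rule 1: a direct call to a known-risky builtin.
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append(f"{filename}:{node.lineno}: call to {node.func.id}()")
        # Rule 2: a string literal assigned to a secret-looking name.
        if (
            isinstance(node, ast.Assign)
            and isinstance(node.value, ast.Constant)
            and isinstance(node.value.value, str)
        ):
            for target in node.targets:
                if isinstance(target, ast.Name) and any(
                    hint in target.id.lower() for hint in SECRET_HINTS
                ):
                    findings.append(
                        f"{filename}:{node.lineno}: hardcoded string in {target.id}"
                    )
    return findings


# The source strings are only parsed, never executed.
prod_code = "user_input = input()\nresult = eval(user_input)\n"
test_code = 'DUMMY_PASSWORD = "not-a-real-secret"\n'

prod_findings = scan_source(prod_code, "app/handlers.py")
test_findings = scan_source(test_code, "tests/test_login.py")
print(prod_findings)
print(test_findings)
```

Both files produce a finding. The rule has no notion that the second file is a test fixture, which is exactly the kind of alert a human ends up triaging by hand.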

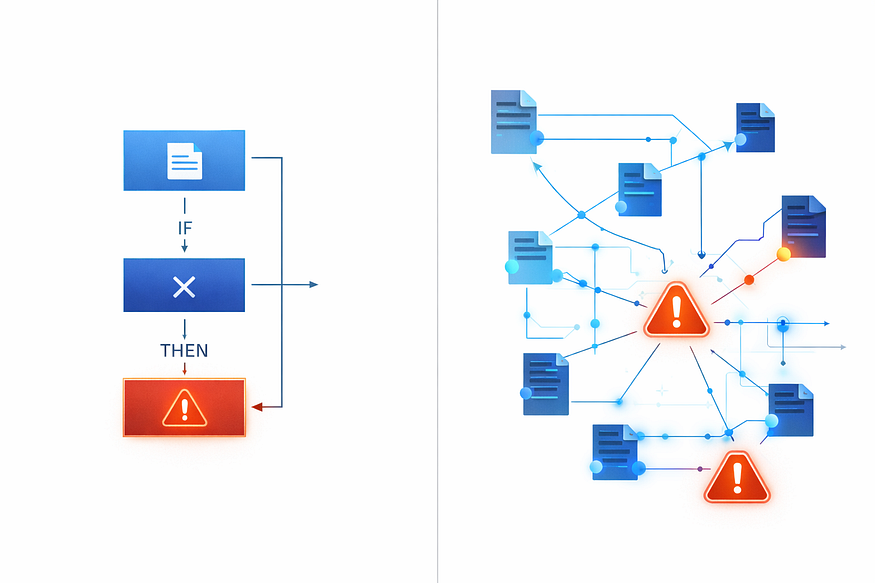

Deterministic pipelines vs AI Agents: AI-generated Image by Author

AI agents change the layer at which security operates. An agent can reason about the context. It can see the hardcoded string, trace its import path, recognise that it is isolated to a test environment, and safely ignore it. Conversely, it can trace a seemingly benign data input across five different microservices and realise that it eventually hits a database query without sanitisation.
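To make the end of that trace concrete, here is a small sketch of what "hits a database query without sanitisation" looks like in practice. It uses an in-memory SQLite database from the standard library; the table, the function names, and the payload are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, is_admin INTEGER)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)", [("alice", 0), ("root", 1)]
)


def find_user_unsafe(name: str) -> list[tuple]:
    # The input is spliced into the SQL text, so it can change the query itself.
    return conn.execute(
        f"SELECT name, is_admin FROM users WHERE name = '{name}'"
    ).fetchall()


def find_user_safe(name: str) -> list[tuple]:
    # A bound parameter is always treated as data, never as SQL.
    return conn.execute(
        "SELECT name, is_admin FROM users WHERE name = ?", (name,)
    ).fetchall()


payload = "nobody' OR '1'='1"
unsafe_rows = find_user_unsafe(payload)  # returns every row in the table
safe_rows = find_user_safe(payload)      # returns no rows
```

In a real system the input and the query are rarely in the same file, let alone the same service, which is why following the data matters more than matching a pattern on any single line.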

The real disruption isn’t that AI replaces scanners overnight. It is that AI moves security from a rigid, rule-based matching game to a semantic understanding of intent.

Are AI Agents Replacing Static Analysis?

It is easy to assume the legacy players are obsolete. The reality of enterprise software architecture tells a different story.

AI agents excel at understanding complex flows. They can explain a vulnerability in plain English, suggest the exact lines of code needed for a patch, and work seamlessly across multiple files to ensure a refactor doesn’t break external dependencies. They understand the why behind the code.

However, AI still struggles with deterministic guarantees.

When you are securing software that controls financial transactions or physical infrastructure, “probably secure” is not an acceptable standard. You need compliance-grade validation. Deep formal verification, where code is mathematically proven to be free of certain classes of bugs, is entirely outside the realm of Large Language Models.

LLMs hallucinate. They are probabilistic engines. A static analysis tool might be noisy, but it is consistent. If you feed it the exact same codebase one million times, it will give you the exact same result one million times. An AI agent might miss a vulnerability on a Tuesday that it caught on a Monday simply because of sampling randomness at a non-zero inference temperature or a slight change in what landed in its context window.
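The difference can be shown with a toy model. The sketch below is not how any real LLM is implemented: `deterministic_rule` stands in for a scanner rule, and `sampled_verdict` draws a verdict from a softmax over two made-up scores to mimic temperature-based sampling.

```python
import math
import random


def deterministic_rule(code: str) -> bool:
    """Stand-in for a scanner rule: same input, same verdict, every time."""
    return "eval(" in code


def sampled_verdict(
    logits: dict[str, float], temperature: float, rng: random.Random
) -> str:
    """Draw one verdict from a softmax over hypothetical model scores."""
    if temperature == 0:
        # Greedy decoding: always pick the highest-scoring verdict.
        return max(logits, key=logits.get)
    weights = [math.exp(score / temperature) for score in logits.values()]
    return rng.choices(list(logits), weights=weights, k=1)[0]


code = "result = eval(user_input)"
# Hypothetical scores; a real model's preferences are not this simple.
logits = {"vulnerable": 1.0, "safe": 0.5}
rng = random.Random(0)

rule_verdicts = {deterministic_rule(code) for _ in range(1000)}
greedy_verdicts = {sampled_verdict(logits, 0, rng) for _ in range(1000)}
sampled_verdicts = {sampled_verdict(logits, 1.0, rng) for _ in range(1000)}
print(rule_verdicts, greedy_verdicts, sampled_verdicts)
```

The rule and the greedy path each produce a single verdict across all runs, while the sampled path produces both. In practice, setting the temperature to zero narrows the variation but does not by itself guarantee identical output from a hosted model.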

This means agents are not replacing static analysis. They are augmenting it.

The COBOL Modernisation Angle

The security implications are massive, but the COBOL modernisation aspect is where the economics of the industry truly shift.

Modernising a core banking system written in COBOL is notoriously difficult. The original developers have often retired. Documentation is either completely missing or severely outdated. The risk of breaking a system that processes billions of dollars a day makes most IT executives avoid the project entirely. They opt to keep the mainframe running at exorbitant costs.

AI changes the calculus of this risk.

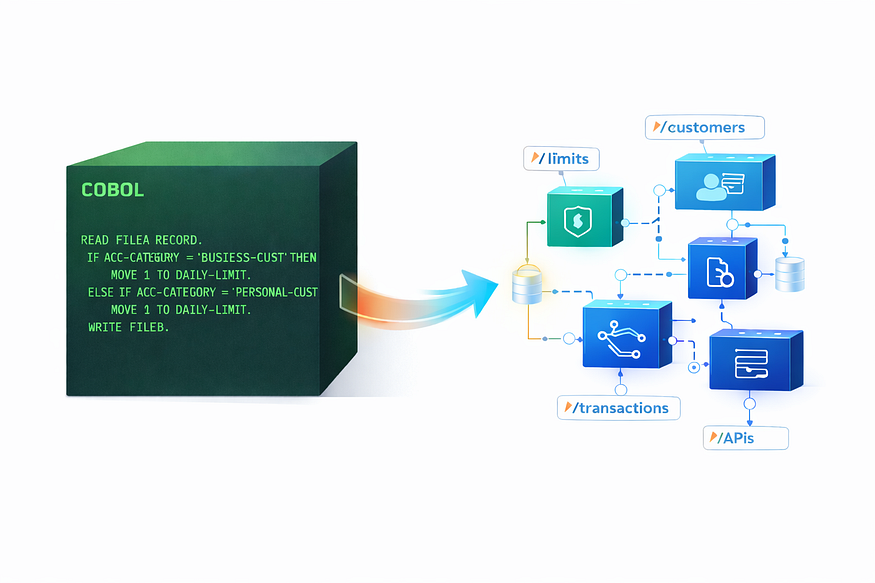

COBOL modernisation: AI-generated Image By Author

An agent can ingest a massive COBOL repository and explain the legacy logic. It can map out exactly how a specific financial batch process works, identify the obscure dependencies, and translate that logic into a modern architecture like a Node.js microservice. More importantly, it can generate the exhaustive test suites required to prove that the new microservice behaves identically to the old mainframe process.
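One common shape for such a test suite is differential testing: run the same inputs through the old and new logic and report every disagreement. The sketch below is kept in Python for consistency with the rest of this article and assumes a hypothetical interest calculation; `legacy_interest` stands in for recorded mainframe results, and the truncate-to-cents behaviour is an assumption about the legacy routine, not something taken from a real system.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP

CENT = Decimal("0.01")


def legacy_interest(balance: Decimal, rate: Decimal) -> Decimal:
    """Stand-in for the mainframe result: truncates to whole cents."""
    return (balance * rate).quantize(CENT, rounding=ROUND_DOWN)


def migrated_interest(
    balance: Decimal, rate: Decimal, rounding: str = ROUND_DOWN
) -> Decimal:
    """Hypothetical rewritten service logic."""
    return (balance * rate).quantize(CENT, rounding=rounding)


def find_mismatches(cases, candidate):
    """Return every input where the candidate disagrees with the legacy result."""
    return [
        (balance, rate)
        for balance, rate in cases
        if candidate(balance, rate) != legacy_interest(balance, rate)
    ]


# Sweep a range of balances at a fixed, made-up rate.
cases = [
    (Decimal(cents) / 100, Decimal("0.0375"))
    for cents in range(0, 100_000, 37)
]

faithful = find_mismatches(cases, migrated_interest)
drifted = find_mismatches(
    cases, lambda b, r: migrated_interest(b, r, rounding=ROUND_HALF_UP)
)
print(len(faithful), len(drifted))
```

The faithful port reports no mismatches, while the version that quietly switched to conventional rounding disagrees on a large share of inputs. A one-cent drift like this is the sort of dropped constraint that only shows up when both implementations are compared on the same data.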

AI doesn’t eliminate the risk of migration. But it reduces the friction and the required human capital enough to actually accelerate these migrations. Systems that were considered immovable objects are suddenly viable modernisation targets.

The Bigger Shift: AI as a Meta-Layer

The most accurate way to view this market shift is not as a replacement cycle, but as the emergence of a new meta-layer.

AI is becoming a supervisory layer over existing software categories. It sits above the deterministic tools, orchestrates them, and integrates across them.

Imagine a CI/CD pipeline. Instead of an AI trying to read one million lines of code to find a bug, the pipeline triggers a traditional, lightning-fast static analysis scan. The scanner finds 500 potential issues. The AI meta-layer then ingests those 500 alerts, traces the context for each one, filters out 480 false positives, and opens a pull request with suggested fixes for the 20 actual vulnerabilities.

This is where the real engineering is happening right now.

To demonstrate this, look at the Python snippet below. This is a simplified, yet structurally sound example of how an AI orchestration layer interacts with a traditional security tool. It runs a deterministic scan (Bandit), parses the JSON output, and uses an LLM to semantically verify the findings.

AI Meta Layer: AI-generated Image By Author

    # (Disclaimer: This is a simplified orchestration script for educational
    # purposes. A production-grade pipeline would include robust error handling,
    # async chunking for massive repos, and strict LLM output parsing via
    # frameworks like DSPy or Instructor).

    import subprocess
    import json
    import os

    from openai import OpenAI
    from pydantic import BaseModel

    # Initialize the LLM client
    client = OpenAI(api_key=os.environ.get("OPENAI_API_KEY"))


    class VulnerabilityAnalysis(BaseModel):
        is_true_positive: bool
        explanation: str
        suggested_fix: str


    def run_deterministic_scan(repo_path: str) -> list:
        """Runs a traditional AST-based scanner and returns raw findings."""
        try:
            # Running Bandit to find security issues in Python code
            result = subprocess.run(
                ["bandit", "-r", repo_path, "-f", "json", "-ll"],
                capture_output=True,
                text=True
            )
            output = json.loads(result.stdout)
            return output.get("results", [])
        except Exception as e:
            print(f"Scanner failed: {e}")
            return []


    def analyze_finding_with_ai(finding: dict, code_snippet: str) -> VulnerabilityAnalysis:
        """Passes the deterministic finding to an LLM for semantic verification."""
        prompt = f"""
        You are a senior security engineer. A deterministic scanner flagged the following code.

        Issue: {finding['issue_text']}
        Severity: {finding['issue_severity']}

        Code Snippet:
        {code_snippet}

        Analyze the context. Is this a true security vulnerability in a production environment,
        or a false positive (e.g., test code, dummy data, safe internal usage)?
        """

        response = client.beta.chat.completions.parse(
            model="gpt-4o",
            messages=[
                {"role": "system", "content": "You are an expert application security reviewer."},
                {"role": "user", "content": prompt}
            ],
            response_format=VulnerabilityAnalysis,
        )
        return response.choices[0].message.parsed


    def main():
        target_repo = "./src"
        print("Running deterministic scan...")
        raw_findings = run_deterministic_scan(target_repo)
        print(f"Found {len(raw_findings)} potential issues. Starting AI verification...")

        for finding in raw_findings:
            # Extract the specific lines of code based on the scanner's line numbers
            code_context = extract_code_context(finding['filename'], finding['line_number'])
            analysis = analyze_finding_with_ai(finding, code_context)

            if analysis.is_true_positive:
                print(f"\n[Verified Vulnerability] in {finding['filename']}")
                print(f"Reason: {analysis.explanation}")
                print(f"Fix: {analysis.suggested_fix}")
            else:
                print(f"\n[False Positive Filtered] in {finding['filename']}")


    # Helper function omitted for brevity. It reads the file and returns surrounding lines.
    def extract_code_context(filepath: str, line_number: int) -> str:
        # ... file reading logic ...
        return "example code snippet"


    if __name__ == "__main__":
        main()
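The script leaves `extract_code_context` as a stub. One possible way to fill it in is sketched below. The `radius` parameter, the line-number prefix, and the lenient decoding are assumptions added for this example, not part of the original script.

```python
from pathlib import Path


def extract_code_context(filepath: str, line_number: int, radius: int = 5) -> str:
    """Return the flagged line plus `radius` lines either side, with line numbers."""
    lines = Path(filepath).read_text(encoding="utf-8", errors="replace").splitlines()
    start = max(line_number - 1 - radius, 0)  # scanner line numbers are 1-based
    end = min(line_number + radius, len(lines))
    return "\n".join(
        f"{number:>4}  {text}"
        for number, text in enumerate(lines[start:end], start=start + 1)
    )
```

Prefixing each line with its number gives the model something to anchor on when it explains a finding or proposes a fix. A wider window, or the whole enclosing function, may well work better for findings that depend on code further from the flagged line.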

What This Means for Developers

As a developer early in my career, currently spending my days diving deep into V8 internals and building applications from the ground up, this shift feels less like a threat and more like a massive signal.

There is a fear that AI will automate away the need for entry-level developers or specialised security analysts. From where I sit, it looks like the exact opposite. Security knowledge is becoming radically more accessible. Legacy systems, which used to be impenetrable black boxes reserved for grey-haired mainframe engineers, are becoming understandable.

The bar for developers is absolutely rising. Knowing how to write a simple CRUD endpoint is no longer a differentiator. The value is shifting toward architectural thinking, understanding how systems integrate, and knowing how to guide an AI agent through a complex refactor without letting it break the underlying data structures. Tooling is becoming AI-assisted by default, meaning we spend less time fighting syntax errors and more time wrestling with business logic.

What Happens Next?

The market reaction to Anthropic’s announcement is justified, but the timeline for actual disruption will be slower than the hype suggests.

Over the next year, we will likely see enterprise security tools integrate LLMs far more deeply into their core products. They will buy or build this meta-layer to maintain their relevance. We will also see a surge of AI-first security startups that build their entire architecture around the orchestrator model, treating traditional scanners as cheap, interchangeable commodities.

Enterprises will adopt hybrid workflows. The most critical, life-safety software will still require formal verification. The vast majority of B2B SaaS applications, however, will rely heavily on AI agents to manage security debt and handle routine patching.

Static analysis is not dead. The category is simply evolving.

The Real Shift

The real question isn’t whether AI replaces cybersecurity tools or modernisation firms.

It is whether security and architectural refactoring become an AI-native layer inside every single development workflow. If an agent lives in your terminal, understands your entire repository, and can predict the security implications of your code before you even hit commit, security ceases to be a separate, bolted-on process.

It becomes an ambient part of the environment. And that is a shift worth paying attention to.
