Photo by Milad Fakurian on Unsplash

Before we continue with agents in our series, let's pause briefly to cover some essential prompting concepts.

Chain-of-thought (CoT) prompting is a technique for improving an LLM's ability to solve complex problems. It structures the prompt so that the model breaks the problem into smaller, more manageable steps and articulates each step explicitly before reaching a conclusion.

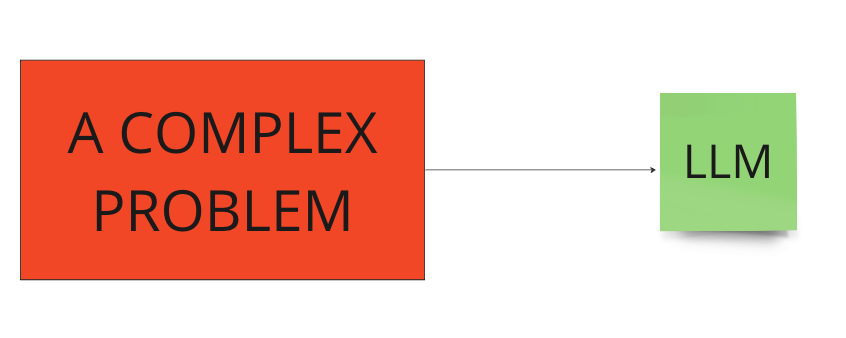

Presenting a complex problem to a LLM is not a good idea. Complex problems often involve multiple variables, hidden factors, and require a deep understanding of the context, which LLMs might not fully grasp.

Not a good method. Image by the author.

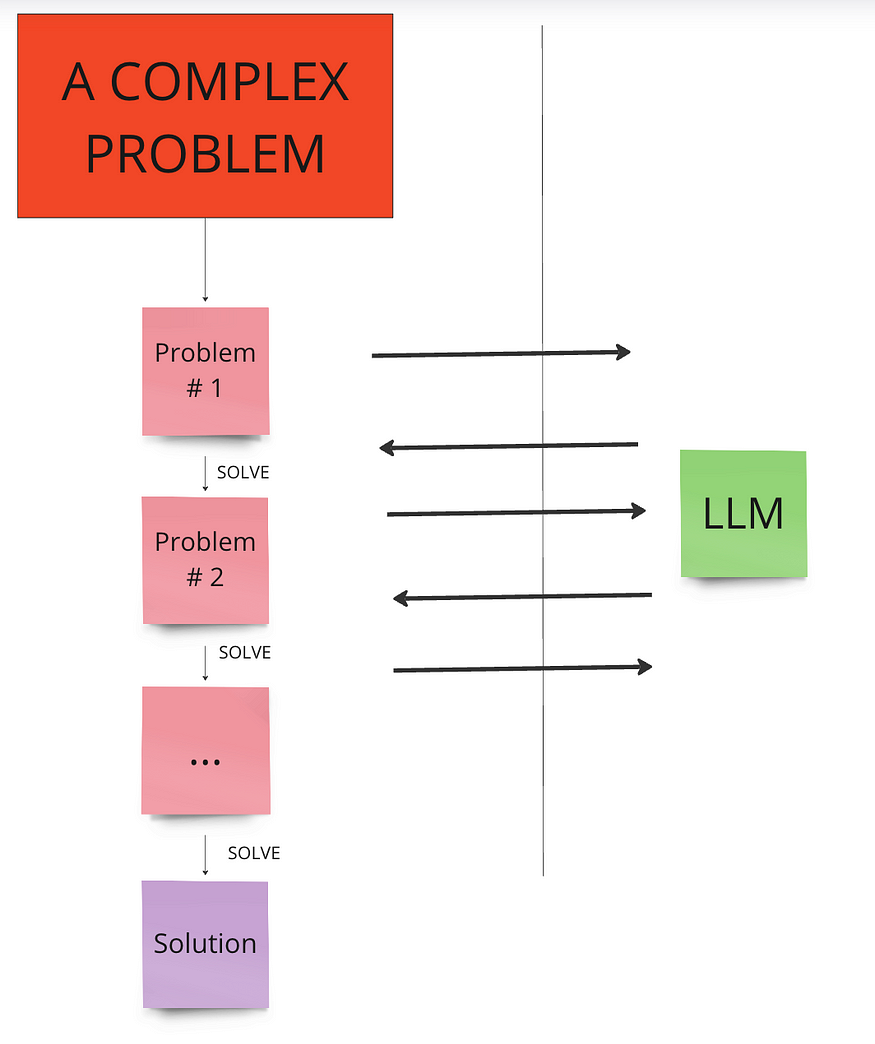

Instead, we can use the chain of thought prompting technique.

- Break down the problem: The prompt is structured in a way that guides the model to decompose the problem into a series of logical steps.

- Step-by-step reasoning: The model then processes each step individually, articulating the reasoning process that leads from one step to the next.

- Conclude: Finally, the model combines the insights from each step to arrive at a final solution.

Breaking into smaller problems. Image by the author.
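The three steps above can be folded into a reusable prompt template. The sketch below is a minimal illustration assuming plain string prompts; `build_cot_prompt` is a hypothetical helper (not part of LangChain), and its instruction wording mirrors the chain-of-thought prompt used later in this article.

```python
def build_cot_prompt(problem: str) -> str:
    """Wrap a problem in chain-of-thought instructions (hypothetical helper)."""
    return (
        "Problem statement:\n\n"
        f"{problem.strip()}\n\n"
        # Step 1: ask the model to break the problem down first.
        "First, list systematically and in detail all the problems in this problem "
        "that need to be solved before we can arrive at the correct answer.\n"
        # Steps 2 and 3: solve the sub-problems in order, then conclude.
        "Then, solve each sub problem using the answers of previous problems "
        "and reach a final solution.\n"
    )


prompt = build_cot_prompt('Take the last letters of the words in "New Hampshire" and concatenate them.')
print(prompt)
```

Since `llm.invoke(...)` accepts a plain string, the returned prompt can be passed to it directly in place of a hand-written query.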

Let's work through a simple example:

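```python
import os

from langchain_openai import ChatOpenAI

# Replace with your own OpenAI API key
os.environ["OPENAI_API_KEY"] = "your-key"

# model
model = "gpt-3.5-turbo-0125"
# A low temperature makes the answers less random
llm = ChatOpenAI(temperature=0.1, model=model)
```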

Here is a problem that spans several layers of popular culture:

Alex, a 35-year-old film enthusiast from Brazil, is watching a documentary about iconic movie soundtracks. He becomes captivated by a song from a film where a bride seeks revenge. Intrigued by the lead actress, he investigates her background and travels to her hometown. Once there, Alex’s attention shifts to the local sports culture. He learns about a famous basketball team known for its rich history and legacy. What is the second name of this basketball team?

The intended chain of reasoning is: Bang Bang (the song) > Kill Bill > Uma Thurman > Boston > Celtics
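Each arrow in that chain is one hop: the answer to one question becomes the input to the next. The sketch below makes that dependency explicit with hard-coded lookup tables holding only the facts stated in this article; `HOPS` and `resolve_chain` are hypothetical names for illustration, and a real LLM has to recall each fact itself rather than read it from a table.

```python
# Hypothetical lookup tables containing only the facts used in this article.
HOPS = [
    ("song -> film", {"Bang Bang": "Kill Bill"}),
    ("film -> lead actress", {"Kill Bill": "Uma Thurman"}),
    ("actress -> hometown", {"Uma Thurman": "Boston"}),
    ("city -> basketball team", {"Boston": "Boston Celtics"}),
]


def resolve_chain(start: str) -> tuple[str, list[str]]:
    """Follow each hop in order, feeding every answer into the next lookup."""
    current = start
    trace = [current]
    for _label, table in HOPS:
        current = table[current]  # each step depends on the previous answer
        trace.append(current)
    return current, trace


team, trace = resolve_chain("Bang Bang")
print(" > ".join(trace))  # Bang Bang > Kill Bill > Uma Thurman > Boston > Boston Celtics
print(team.split()[1])    # Celtics
```

Skipping a hop, for example jumping straight from "rich history and legacy" to a team, breaks the chain; that is the shortcut the first attempt below falls into.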

Let's give this problem to the LLM as is:

```python
problem = """
Alex, a 35-year-old film enthusiast from Brazil, is watching a documentary about iconic movie soundtracks. He becomes captivated by a song from a film where a bride seeks revenge. Intrigued by the lead actress, he investigates her background and travels to her hometown. Once there, Alex’s attention shifts to the local sports culture. He learns about a famous basketball team known for its rich history and legacy. What is the second name of this basketball team?
"""

query = f"""Problem statement:

{problem}

Can you solve this problem?
"""

llm.invoke(query)

"""
AIMessage(content='The second name of the basketball team known for its
rich history and legacy in the lead actress\'s hometown is likely the "Bulls."
This is a common nickname for the Chicago Bulls, a famous basketball team
with a rich history and legacy in the sport.')
"""
```

Because the team was described as having a "rich history and legacy," the LLM latched onto that clue alone and jumped to the Chicago Bulls, one of the best-known basketball teams, without working through the chain of clues that leads to Boston.

Let's try again, this time asking the model to decompose the problem first:

```python
cot_query = f"""Problem statement:

{problem}

First, list systematically and in detail all the problems in this problem
that need to be solved before we can arrive at the correct answer.
Then, solve each sub problem using the answers of previous problems
and reach a final solution.
"""

llm.invoke(cot_query)

"""
AIMessage(content='Sub-problems to solve:\n\n
1. Identify the film with a bride seeking revenge that features the song Alex is captivated by.\n
2. Determine the lead actress in the film.\n
3. Investigate the background of the lead actress to find out her hometown.\n
4. Identify the hometown of the lead actress.\n
5. Shift attention to the local sports culture in the hometown.\n
6. Learn about a famous basketball team in the hometown known for its rich history and legacy.\n
7. Determine the second name of the basketball team.\n\n
Solution:\n\n
1. The film with a bride seeking revenge that features the song Alex is captivated by is "Kill Bill: Volume 1."\n
2. The lead actress in "Kill Bill: Volume 1" is Uma Thurman.\n
3. Uma Thurman\'s background reveals that she was born in Boston, Massachusetts, USA.\n
4. Boston, Massachusetts is the hometown of Uma Thurman.\n
5. In Boston, the local sports culture is dominated by basketball, particularly the NBA team, the Boston Celtics.\n
6. The Boston Celtics are a famous basketball team known for their rich history and legacy.\n
7. The second name of the Boston Celtics is "C\'s."')
"""
```

Here, the LLM was asked to break the problem into smaller parts and solve them in sequence, using the answers to earlier sub-problems. This time it followed the full chain and correctly identified the Boston Celtics, although its final line gives "C's" rather than "Celtics" as the second name.
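Because the chain-of-thought prompt asks for a numbered list of sub-problems followed by a numbered solution, the reply has a predictable shape that can be post-processed. The sketch below assumes the reply contains the literal headings `Sub-problems to solve:` and `Solution:` seen in the output above; the model is not guaranteed to reuse them, so treat `split_cot_reply` as a hypothetical, best-effort helper.

```python
import re


def split_cot_reply(text: str) -> dict[str, list[str]]:
    """Split a chain-of-thought reply into sub-problems and solution steps.

    Assumes the headings "Sub-problems to solve:" and "Solution:" appear in
    the reply, as in the example output; returns empty lists otherwise.
    """
    match = re.search(r"Sub-problems to solve:(.*?)Solution:(.*)", text, re.DOTALL)
    if not match:
        return {"sub_problems": [], "solution": []}

    def numbered_items(block: str) -> list[str]:
        # Capture the text after "1.", "2.", ... up to the next item or the end.
        items = re.findall(r"\d+\.\s*(.*?)(?=\s*\d+\.\s|\Z)", block, re.DOTALL)
        # Collapse internal whitespace and newlines into single spaces.
        return [" ".join(item.split()) for item in items]

    return {
        "sub_problems": numbered_items(match.group(1)),
        "solution": numbered_items(match.group(2)),
    }


reply = (
    "Sub-problems to solve:\n\n1. Identify the film.\n2. Determine the lead actress.\n\n"
    "Solution:\n\n1. The film is \"Kill Bill: Volume 1.\"\n2. The lead actress is Uma Thurman."
)
parts = split_cot_reply(reply)
print(parts["solution"][-1])  # The lead actress is Uma Thurman.
```

With a LangChain chat model, the reply text would come from the `content` attribute of the returned `AIMessage`.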

Let’s try another one:

Take the last letters of the words in "New Hampshire" and concatenate them.

```python
problem = """
take the last letters of the words in "New Hampshire" and concatenate them.
"""

query = f"""Problem statement:

{problem}

Can you solve this problem?
"""

llm.invoke(query)

"""
AIMessage(content='Yes, the last letters of the words in "New Hampshire" are
"w e e". When concatenated, they form the word "wee".')
"""
```

The answer is wrong: "New Hampshire" has only two words, so the result should have only two letters. Now with the chain-of-thought prompt:

```python
cot_query = f"""Problem statement:

{problem}

First, list systematically and in detail all the problems in this problem that need to be solved before we can arrive at the correct answer. Then, solve each sub problem using the answers of previous problems and reach a final solution.
"""

llm.invoke(cot_query)

"""
AIMessage(content='Sub-problems to solve:\n\n
1. Identify the words in "New Hampshire" that need to be considered for taking the last letters.\n
2. Determine the last letter of each word.\n
3. Concatenate the last letters of the words in the correct order.\n\n
Solution:\n\n
1. The words in "New Hampshire" are "New" and "Hampshire".\n
2. The last letters of each word are "w" and "e" respectively.\n
3. Concatenating the last letters in order gives us the final solution: "we".')
"""
```

With the task broken into explicit steps, the model arrives at the correct answer, "we".
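Because this task has a deterministic answer, both replies can be checked in plain Python. The sketch below computes the expected result directly; `last_letters` is a hypothetical helper, and the two answer strings are copied from the model outputs above.

```python
def last_letters(phrase: str) -> str:
    """Concatenate the last letter of every whitespace-separated word."""
    return "".join(word[-1] for word in phrase.split())


expected = last_letters("New Hampshire")
print(expected)  # we

# Final answers taken from the two model replies above.
direct_answer = "wee"
cot_answer = "we"
print(direct_answer == expected)  # False
print(cot_answer == expected)     # True
```

A check like this is a cheap way to compare prompting strategies on tasks whose answers can be computed independently.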

Next: LangChain in Chains #23: ReAct (Reasoning And Acting)
