At my company, we use the Amazon Web Services (AWS) infrastructure. We help organizations improve the efficiency of parking lots, and to do that we need to communicate with their computing systems. However, these organizations, which include hospitals and universities, often run closed private networks. Outside vendors like us may access those networks only through an IPSec-based VPN.

Is it possible to create an IPsec tunnel from an AWS Virtual Private Cloud (VPC) to a network outside of AWS? The use case that AWS supports well is connecting your own on-premises network with the VPC. Thus, in naming components, AWS uses the term “Customer Network” to designate your on-premises network. You are the customer of AWS.

Also, because you are the administrator of your on-premises network, AWS does not expose extensive logs that would allow you to troubleshoot the establishment of the IPSec tunnel. Instead, AWS assumes that you would be able to inspect the logs on the side of the on-premises network.

But what if you wished to create an IPSec tunnel from your VPC to a third-party network, one which is beyond your administrative control? In particular, you may not control the CIDR policy of the third-party network. A third party may require that you place your network on a specific CIDR, or that you use publicly addressable IP addresses.

To support IPsec tunnels, AWS has for many years offered a specialized solution called the Virtual Private Network (VPN). In recent years, AWS supplemented it with a more generic solution called the Transit Gateway (TGW). The VPN solution requires that the customer’s network does not conflict with your CIDR. If the third party requires a particular address range and you are not willing to change the IP addresses inside your VPC to match, you need to use Network Address Translation (NAT). This can be accomplished by creative use of the Transit Gateway.
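Whether two address ranges conflict can be checked mechanically before any AWS resources are created. The following is a minimal sketch in plain shell arithmetic; the helper names `ip_to_int` and `cidr_overlap` are ours and are not part of any AWS tooling.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  echo "$1" | {
    IFS=. read -r a b c d
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
  }
}

# Print "overlap" if two CIDR blocks share any address, else "disjoint".
# Two blocks overlap exactly when they agree on the shorter of the two prefixes.
cidr_overlap() {
  local ip1 len1 ip2 len2 len mask n1 n2
  ip1=${1%/*}; len1=${1#*/}
  ip2=${2%/*}; len2=${2#*/}
  len=$len1
  if [ "$len2" -lt "$len" ]; then len=$len2; fi
  if [ "$len" -eq 0 ]; then
    mask=0
  else
    # 4294967295 is 0xFFFFFFFF; keep only the top $len bits.
    mask=$(( (4294967295 << (32 - len)) & 4294967295 ))
  fi
  n1=$(ip_to_int "$ip1")
  n2=$(ip_to_int "$ip2")
  if [ $(( n1 & mask )) -eq $(( n2 & mask )) ]; then
    echo overlap
  else
    echo disjoint
  fi
}

cidr_overlap 20.0.0.0/16 172.31.0.0/16     # prints: disjoint
cidr_overlap 172.31.0.0/16 172.31.38.0/24  # prints: overlap
```

The first call uses the two networks from the example in this article; the second shows what a conflict looks like.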

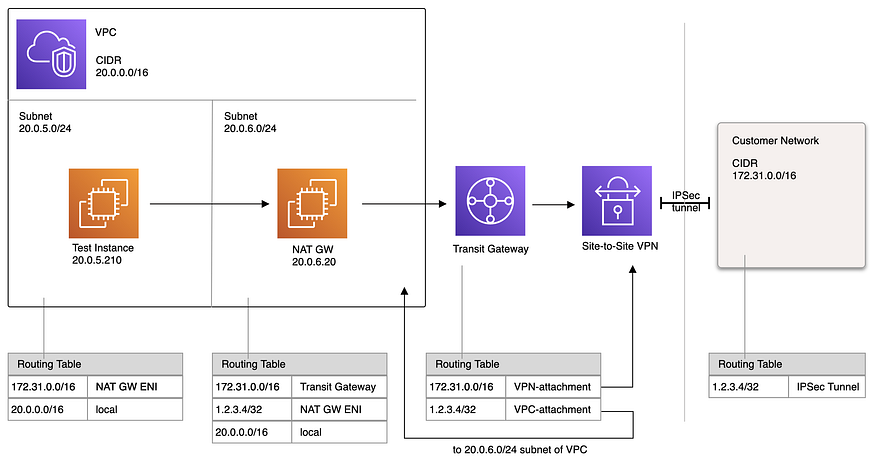

Below is the network layout that illustrates the key idea. Assume that the AWS VPC has the subnet 20.0.0.0/16, the customer's network is on the 172.31.0.0/16 subnet, and that the customer wants us to use a public IP subnet. (We use AWS' terminology of "customer" to refer to a third party.) But, instead of using a public IP subnet, we will use NAT to map all EC2 instances to the single Elastic public IP 1.2.3.4.

Inside our VPC we create a subnet 20.0.6.0/24 whose sole purpose is to contain a NAT gateway EC2 instance ("NAT GW" in the diagram) that performs the NAT operation. Note that AWS has a built-in component called "NAT gateway," but here we run our own EC2 instance that performs this function using Linux and the iptables packet filter.

The rest of the EC2 instances in our VPC live in separate subnets. The routing tables in those subnets forward packets that are destined to the customer’s side to the Elastic Network Interface (ENI) of the NAT gateway instance.

The subnet in which the NAT gateway lives has a routing table that forwards packets that are destined for the customer to the Transit Gateway. By this time, however, the source address of these packets is the public IP 1.2.3.4, because the NAT instance has already translated them. Unlike the more basic Virtual Private Network AWS component, the Transit Gateway AWS component does not place any restrictions on the source address of the packet.

The Transit Gateway has a routing table that tells it where to send the packets next. Packets destined for the customer’s side are forwarded to the “Site-to-Site VPN” AWS component. This is the component that holds all the IPsec tunnel options. In particular, it lists the endpoint IPs on the AWS side and the customer’s side. Note that AWS lets us specify only the endpoint IP on the customer’s side and automatically picks a public IP for its own side. (We have no control over this IP address.)

If the Site-to-Site VPN component can establish the IPsec connection, then upon receiving the packets from the Transit Gateway, it forwards them through the tunnel. The customer sees 1.2.3.4 as the source IP of the packets, and the customer’s routing table sends packets destined for 1.2.3.4 back into the tunnel.

Returning Packets

So far we have discussed how the packets originating at a test EC2 instance in our VPC make their way to the customer’s side. Now let’s see the process in the opposite direction. Once the Site-to-Site VPN connection receives a packet destined for the 1.2.3.4 IP address, it forwards it to the Transit Gateway (TGW). The routing table of the TGW tells it to forward such packets to the particular subnet inside the VPC where the NAT GW instance runs.

Once the packet arrives in that subnet, a specific entry in the subnet’s routing table tells the subnet’s router to forward the packet to the Elastic Network Interface (ENI) of the NAT gateway instance. The NAT instance picks up the packet and de-NATs its destination back into the 20.0.0.0/16 network address space. Then, it dispatches the modified packet and AWS forwards it to the test EC2 instance.

Configuration of the Linux NAT instance

For the NAT gateway instance to receive packets that do not carry its own IP address in the destination header, AWS must be told to disable the “source/destination check.” This can be done in the AWS Console EC2 “Instances” view like this: right-click on the instance to bring up a popup menu, then select “Networking” → “Change source destination check” and disable the check.
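The same change can be made from the command line. This is a minimal sketch, assuming the AWS CLI is installed and configured with sufficient permissions; the function names are ours, and the instance ID in the usage comment is a placeholder.

```shell
# Disable the source/destination check on an EC2 instance.
# Usage: disable_src_dst_check i-0123456789abcdef0   (placeholder instance ID)
disable_src_dst_check() {
  aws ec2 modify-instance-attribute --instance-id "$1" --no-source-dest-check
}

# Print the current setting; "false" means the check is disabled.
show_src_dst_check() {
  aws ec2 describe-instance-attribute --instance-id "$1" \
    --attribute sourceDestCheck \
    --query 'SourceDestCheck.Value' --output text
}
```

Both functions only wrap a single AWS CLI call, so nothing happens until they are invoked with a real instance ID.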

We must also tell Linux to forward such packets, by enabling IP forwarding with this command:

$ echo 1 > /proc/sys/net/ipv4/ip_forward
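A value written to /proc lasts only until the next reboot. One way to make it persistent is a sysctl drop-in file. This is a sketch under assumptions: the file name 99-nat-forwarding.conf and the helper name are ours, and the target directory is a parameter so the function can be tried out without root.

```shell
# Write a sysctl drop-in that enables IPv4 forwarding at boot.
# $1 is the target directory; /etc/sysctl.d is the usual location.
write_forwarding_conf() {
  printf 'net.ipv4.ip_forward = 1\n' > "$1/99-nat-forwarding.conf"
}

# On the NAT instance, as root (left commented out so the sketch has no side effects):
# write_forwarding_conf /etc/sysctl.d && sysctl --system
```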

Assuming that the local IP address of the NAT instance is 20.0.6.195, these iptables commands set up the NAT operation,

$ iptables -t nat -F

$ iptables -t nat -A POSTROUTING -d 172.31.0.0/16 -j SNAT --to-source 1.2.3.4

$ iptables -t nat -A PREROUTING -d 1.2.3.4 -j DNAT --to-destination 20.0.6.195

To handle additional customer networks we may add more SNAT lines. For example, for a customer network with CIDR 192.168.0.0/16 we would add this rule,

$ iptables -t nat -A POSTROUTING -d 192.168.0.0/16 -j SNAT --to-source 1.2.3.4

If there was another customer who wanted to see our instances coming from a different address space, then we would also add additional DNAT lines. For example, if a customer on network 10.0.0.0/16 wanted our traffic to appear as if it's coming from 10.1.0.0/16, then we could: (a) SNAT all outgoing packets to an IP address on that CIDR, for instance 10.1.0.1, and (b) DNAT them back to 20.0.6.195 upon return.

$ iptables -t nat -A POSTROUTING -d 10.0.0.0/16 -j SNAT --to-source 10.1.0.1

$ iptables -t nat -A PREROUTING -d 10.1.0.1 -j DNAT --to-destination 20.0.6.195

(In addition to updating the NAT rules, we would also need to update the AWS infrastructure as specified by the diagram: to add entries to subnet routing tables and to create additional Site-to-Site VPN connections and associate them with the Transit Gateway.)
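As the number of customers grows, the rule pairs can be generated from a small table rather than typed by hand. The sketch below only prints the iptables commands so they can be reviewed before being run as root; the function name `nat_rules_for` and the table layout are our own illustration.

```shell
# Print the SNAT/DNAT rule pair for one customer network.
#   $1  customer CIDR
#   $2  source address the customer should see
#   $3  private IP of the NAT instance
nat_rules_for() {
  echo "iptables -t nat -A POSTROUTING -d $1 -j SNAT --to-source $2"
  echo "iptables -t nat -A PREROUTING -d $2 -j DNAT --to-destination $3"
}

NAT_PRIVATE_IP=20.0.6.195

# One "customer-CIDR visible-source-IP" pair per line, taken from the examples above.
while read -r cidr src; do
  nat_rules_for "$cidr" "$src" "$NAT_PRIVATE_IP"
done <<EOF
172.31.0.0/16 1.2.3.4
10.0.0.0/16 10.1.0.1
EOF
```

Customers that share the same visible source address, such as the 192.168.0.0/16 example, need only the extra SNAT line, because the DNAT rule for that address already exists.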

Testing

We can test our setup by simulating a Customer network using an AWS tutorial to create a StrongSwan Linux VPN. We create another VPC to represent the “Customer’s” side and set its subnet to 172.31.0.0/16 CIDR. Then we follow the tutorial to create a StrongSwan Linux instance in it. We create another test EC2 instance in the same VPC and configure the routing table of the VPC to forward packets with destination 20.0.0.0/16 to the Elastic Network Interface (ENI) of the StrongSwan instance. Also, we assign a public IP to the StrongSwan instance.

Returning to our main VPC, we create a Site-to-Site VPN connection and set the public IP of the StrongSwan instance as the destination. We fill in the rest of the configuration as advised by the tutorial, and wait until the first tunnel shows that it is in the UP state.

The tutorial advises using Border Gateway Protocol (BGP) when creating a Site-to-Site VPN connection. Once the tunnel is set up, the StrongSwan instance is automatically configured with the 20.0.0.0/16 subnet thanks to BGP. However, our packets will be arriving with 1.2.3.4 as the source address, so the BGP routing table would not know where to send the returning packets. To fix this, we connect to the StrongSwan instance and edit the configuration file /etc/quagga/zebra.conf for the BGP daemon Zebra, to add a static route:

ip route 1.2.3.4/32 169.254.152.245

Then, we restart the BGP daemon with the service zebra restart command. Note that the IP address 169.254.152.245 in the above configuration line is the "Inside IP Address" of the Virtual Private Gateway of one of the two IPsec tunnels that the Site-to-Site VPN Connection created. You will have a different address, which you can look up from the Generic Configuration text file that can be downloaded from the Site-to-Site VPN Connection screen of the AWS console.
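The address can also be derived from a tunnel's inside CIDR. The sketch below rests on an assumption that should be verified against the downloaded configuration file: that AWS takes the first usable address of the /30 block and leaves the second to the customer gateway. The helper name `aws_inside_ip` is ours.

```shell
# Given a tunnel's inside /30 CIDR, print the AWS-side inside address,
# assumed here to be the network address plus one.
# The inside CIDRs themselves can be listed with:
#   aws ec2 describe-vpn-connections \
#     --query 'VpnConnections[].Options.TunnelOptions[].TunnelInsideCidr' --output text
aws_inside_ip() {
  echo "${1%/*}" | {
    IFS=. read -r a b c d
    # Clear the two host bits of the last octet, then add one.
    echo "$a.$b.$c.$(( (d & 252) + 1 ))"
  }
}

aws_inside_ip 169.254.152.244/30   # prints: 169.254.152.245
```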

Next, we connect to the test EC2 instance in the 20.0.5.0/24 subnet and ping the test instance in the customer's 172.31.0.0/16 subnet,

[ec2-user@ip-20-0-5-210 ~]$ ping 172.31.38.197 > /dev/null & sudo tcpdump -eni any icmp

15:49:14.787108 Out ... 20.0.5.210 > 172.31.38.197: ICMP echo request, ...

15:49:14.854690 In ... 172.31.38.197 > 20.0.5.210: ICMP echo reply ...

We can also observe the traffic flowing through the NAT gateway instance:

ec2-user@ip-20-0-6-20:~$ sudo tcpdump -eni any icmp

20:39:46.084613 In … 172.31.38.197 > 1.2.3.4: ICMP echo request, id 26627, seq 1 ...

20:39:46.084657 Out … 1.2.3.4 > 172.31.38.197: ICMP echo reply, id 26627, seq 1 ...

The following helpful AWS CLI commands output all configurations for all Site-to-Site VPN connections. They include all tunnel parameters, particularly the secret Preshared Keys (PSKs).

$ aws ec2 describe-vpn-connections

$ aws ec2 describe-transit-gateways

$ aws ec2 describe-transit-gateway-attachments
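When waiting for a tunnel to come up, it is often enough to look at the tunnel telemetry rather than the full output. A minimal sketch, assuming a configured AWS CLI; the function name `vpn_tunnel_status` is ours. Unlike the full output, this query does not print the preshared keys.

```shell
# List each tunnel's outside IP, status (UP or DOWN) and status message.
vpn_tunnel_status() {
  aws ec2 describe-vpn-connections \
    --query 'VpnConnections[].VgwTelemetry[].[OutsideIpAddress,Status,StatusMessage]' \
    --output text
}
```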

Troubleshooting the IPSec tunnel

Unfortunately, AWS still has not created a way to debug the actual IPsec tunnel establishment, because it has not exposed any logs of this stage. The use case that AWS aims to solve is connecting your own on-premises network with your own AWS VPC in the cloud. In such a case, we could debug the on-premises side of the IPsec connection. However, if the customer is a third party and the IPsec connection is failing, we are left at the mercy of the third party to debug the issue.

Terraform Configuration of the Transit Gateway

The following Terraform resource definitions set up the Transit Gateway and help illustrate all of the settings further. For brevity, name tags and some other sections have been omitted.

First, we define the Transit Gateway and disable all the default routes.

resource "aws_ec2_transit_gateway" "example_transit_gateway" {

amazon_side_asn = 64512

auto_accept_shared_attachments = "disable"

default_route_table_association = "disable"

default_route_table_propagation = "disable"

description = "Example Transit Gateway."

vpn_ecmp_support = "disable"

}

Since we told AWS to not create a default route table for the TGW, we must create it by hand like this:

resource "aws_ec2_transit_gateway_route_table" "example_transit_gateway" {

transit_gateway_id = aws_ec2_transit_gateway.example_transit_gateway.id

}

The Transit Gateway has a split configuration of “routes” and “attachments.” Each route specifies an attachment as its destination. We first create the attachment to the VPC subnet in which the NAT gateway EC2 instance lives (here named private_subnet6),

resource "aws_ec2_transit_gateway_vpc_attachment" "nat_vpc_attachment" {

vpc_id = module.vpc.id

subnet_ids = [ aws_subnet.private_subnet6.id ]

transit_gateway_id = aws_ec2_transit_gateway.example_transit_gateway.id

transit_gateway_default_route_table_association = false

transit_gateway_default_route_table_propagation = false

}

Next, we add the route that tells the TGW to forward packets destined to 1.2.3.4 to the VPC subnet which we have just attached:

resource "aws_ec2_transit_gateway_route" "nat-egress-ip" {

destination_cidr_block = "1.2.3.4/32"

transit_gateway_attachment_id = aws_ec2_transit_gateway_vpc_attachment.nat_vpc_attachment.id

transit_gateway_route_table_id = aws_ec2_transit_gateway_route_table.example_transit_gateway.id

}

So far we have handled the returning of the packets. Now, let’s handle the forward direction. First, we define the Customer Gateway and the Site-to-Site VPN connection and then tell TGW to forward packets to it.

resource "aws_customer_gateway" "example_customer" {

bgp_asn = 64520

ip_address = "6.7.8.9" # this would be the public IP of the StrongSwan instance during test

type = "ipsec.1"

}

resource "aws_vpn_connection" "example_customer" {

customer_gateway_id = aws_customer_gateway.example_customer.id

transit_gateway_id = aws_ec2_transit_gateway.example_transit_gateway.id

type = aws_customer_gateway.example_customer.type

static_routes_only = false

}

resource "aws_ec2_transit_gateway_route_table_association" "example_customer" {

transit_gateway_attachment_id = aws_vpn_connection.example_customer.transit_gateway_attachment_id

transit_gateway_route_table_id = aws_ec2_transit_gateway_route_table.example_transit_gateway.id

}

resource "aws_ec2_transit_gateway_route" "example_customer" {

destination_cidr_block = "172.31.0.0/16"

transit_gateway_attachment_id = aws_vpn_connection.example_customer.transit_gateway_attachment_id

transit_gateway_route_table_id = aws_ec2_transit_gateway_route_table.example_transit_gateway.id

}

Terraform configuration of the custom NAT Gateway instance

The challenge in automatically configuring the NAT gateway EC2 instance is (a) to assign an Elastic public IP, and (b) to use the assigned private IP in the iptables rules. The solution is to first define an Elastic Network Interface (ENI) and then to use it in the definition of the instance:

resource "aws_network_interface" "nat_gw" {

source_dest_check = false # must be disabled for NAT to work

subnet_id = module.vpc.sn-private-nat-az1

security_groups = [ ... ]

}

resource "aws_eip_association" "nat_gw" {

network_interface_id = aws_network_interface.nat_gw.id

allocation_id = "eipalloc-..." # the allocation ID of the Elastic IP 1.2.3.4, not the address itself

}

resource "aws_instance" "nat_gw" {

network_interface {

device_index = 0

network_interface_id = aws_network_interface.nat_gw.id

}

...

user_data = <<EOF

#!/bin/bash

echo 1 > /proc/sys/net/ipv4/ip_forward

iptables -t nat -F

iptables -t nat -A POSTROUTING -d 172.31.0.0/16 -j SNAT --to-source 1.2.3.4

iptables -t nat -A PREROUTING -d 1.2.3.4 -j DNAT --to-destination ${aws_network_interface.nat_gw.private_ip}

EOF

}

Security Considerations

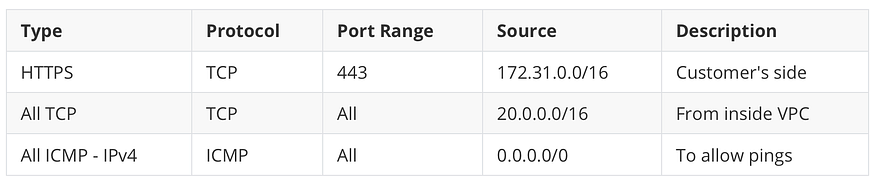

Because we are using NAT, no instance behind the NAT GW instance can be accessed from the customer’s network. However, the NAT gateway itself can be reached from the customer’s network. The ports which can be accessed are limited by the AWS Security Group of the subnet in which the NAT gateway lives. For instance, the following inbox Security Group rules would allow the customer’s side to only ping the instance and to make HTTPS requests to it:

Access can be also locked down by restricting the DNAT rule in iptables. Upon restricting it to the icmp protocol as shown below, the remote side would still be able to ping the NAT gateway at 1.2.3.4, yet would not be able to HTTPS into it.

iptables -t nat -A PREROUTING -d 1.2.3.4 -p imcp -j DNAT ...

Final Thoughts

We can get more mileage out of the Transit Gateway than from the older Virtual Private Network (VPN) AWS component. That is because the Transit Gateway is indifferent to the source CIDR of the packets that it receives.

We hope that if more companies use the TGW to connect to outside networks using NAT, AWS will support this use case directly in the Site-to-Site VPN settings, so that there is no need to maintain an EC2 instance to perform NAT. We also wish that AWS would expose logs of the IPsec tunnel establishment in Site-to-Site VPN to help with troubleshooting at that stage.
